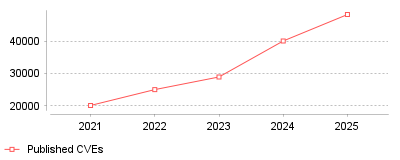

| Year | Published CVEs |
|---|---|
| 2021 | 20,161 |
| 2022 | 25,059 |
| 2023 | 28,961 |
| 2024 | 40,077 |
| 2025 | 48,244 |

Over the past five years, the volume of published Common Vulnerabilities and Exposures (CVEs) has more than doubled, from 20,161 in 2021 to a record 48,244 in 2025 [1]. Over the same period, the median time from a vulnerability's disclosure to its first observed exploit has compressed from 84 days in 2021 to effectively zero in 2025, with many vulnerabilities now weaponized before official patches are released [2].
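
The growth described above can be checked directly against the table. The following is a minimal sketch that computes year-over-year change and the overall 2021 to 2025 multiple; the function name `yoy_growth` is illustrative and the counts are copied from the table.

```python
# Published CVE counts per year, copied from the table above.
cve_counts = {2021: 20161, 2022: 25059, 2023: 28961, 2024: 40077, 2025: 48244}


def yoy_growth(counts):
    """Return {year: percent change versus the previous year}."""
    years = sorted(counts)
    return {
        year: (counts[year] - counts[prev]) / counts[prev] * 100
        for prev, year in zip(years, years[1:])
    }


growth = yoy_growth(cve_counts)
for year, pct in growth.items():
    print(f"{year}: {pct:+.1f}%")

# Overall multiple between the first and last year in the table.
total_multiple = cve_counts[2025] / cve_counts[2021]
print(f"2021 -> 2025: {total_multiple:.2f}x")
```

On these figures the largest single-year jump is 2023 to 2024, at roughly 38%, and the 2025 count is about 2.4 times the 2021 count.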

For the cybersecurity industry, this data signals the end of the traditional "patching grace period" that security teams once relied upon. Threat actors now use automated, AI-driven tools to scan for and exploit weaknesses the moment they are publicized, or even beforehand [2]. At the organizational level, security teams face severe burnout and growing backlogs, as high- and critical-severity application vulnerabilities still take an average of 54.81 days to remediate manually [3]. At the industry level, the gap between a near-zero time-to-exploit and a roughly two-month Mean Time to Remediate (MTTR) is driving a shift toward continuous Penetration Testing as a Service (PTaaS) [4].

The widening gap between attacker speed and defender response times correlates with the increasing frequency, success rate, and financial impact of data breaches. If organizations continue to rely exclusively on annual penetration tests and periodic vulnerability scans, they will remain blind to critical exposures, including zero-days, for months at a time [5]. Understanding this trend is essential for security leaders who must justify moving their budgets toward continuous validation, real-time threat intelligence, and AI-augmented defensive tooling.

The steep rise in CVE volumes is largely a byproduct of an expanding digital attack surface, accelerated by rapid cloud adoption, complex software supply chains, and the proliferation of open APIs [6]. Security researchers and bug bounty programs are also strongly incentivized today, leading to more rigorous scrutiny and public discovery of these underlying flaws [7]. The compression of the exploitation timeline is fueled by the industrialization of cybercrime and the integration of generative AI into offensive operations. Adversaries can now deploy agentic AI to autonomously discover, verify, and weaponize vulnerabilities across vast networks without human intervention, sharply reducing the time it takes to launch a successful attack [8].

The combination of surging vulnerability volumes and near-immediate exploitation represents a fundamental shift in the global cyber threat landscape. Organizations can no longer rely on reactive, scheduled patching cadences, because the traditional window for remediation has effectively closed. The main takeaway is that continuous, automated penetration testing and active exposure management are now baseline requirements in this highly weaponized digital ecosystem.

The global security testing sector reached $14.5 billion in 2024. MarketsandMarkets projects this market will grow to $43.9 billion by 2029, representing a 24.7% compound annual growth rate [1]. Enterprise budgets reflect a massive shift toward proactive defense mechanisms. The average cost of a data breach climbed to $4.88 million globally in 2024, representing a 10% year-over-year increase [2]. This financial exposure forces chief information security officers to justify heavy capital allocation toward cybersecurity, privacy & compliance software. Software release cycles dictate this spending acceleration. Development teams push code updates daily, expanding the application attack surface. Organizations deploy vulnerability scanning & pen testing tools to identify critical flaws before malicious actors exploit them in production environments. Application security testing commands the largest portion of this budget, driven by the immediate necessity to secure web interfaces, mobile applications, and backend programming interfaces.
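
The growth rate quoted above follows from the two market-size figures. The sketch below recomputes the compound annual growth rate from them; the function name `cagr` is illustrative, and treating 2024 to 2029 as exactly five compounding periods is an assumption.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.25 means 25%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the paragraph above, in billions of USD.
# Assumption: five full compounding periods between 2024 and 2029.
market_cagr = cagr(14.5, 43.9, 2029 - 2024)
print(f"Implied CAGR 2024-2029: {market_cagr:.1%}")
```

The implied rate lands within a fraction of a percentage point of the quoted 24.7%; a small gap of that size is consistent with rounding in the published market figures.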

Specific testing disciplines demonstrate varied growth trajectories. The penetration testing segment alone generated $2.45 billion in 2024 and is projected to exceed $6 billion by the early 2030s [3]. North America controls the largest regional share of this expenditure, fueled by strict regulatory oversight and high threat awareness among corporate boards. Companies face a shortage of ethical hacking talent. This labor deficit drives demand for penetration testing as a service, allowing internal teams to outsource validation exercises rather than maintain expensive internal red teams. The transition from reactive patching to continuous validation fundamentally alters how procurement departments evaluate security investments.

Federal regulators reshaped cyber accountability standards in 2023. The Securities and Exchange Commission finalized strict disclosure rules for public entities. Companies must report material cyber incidents on Form 8-K within four business days of determining materiality [4]. The timeline begins at the exact moment of materiality determination, leaving security teams zero margin for hesitation. Furthermore, Regulation S-K Item 106 requires annual disclosures detailing how organizations assess, identify, and manage material cyber risks. The SEC no longer accepts vague assurances. Enforcement actions throughout 2024 proved that regulators demand documented, systematic risk management processes backed by continuous testing.
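
The four-business-day clock described above is easy to miscount around weekends. The following is a minimal sketch of the date arithmetic only; the function name `add_business_days` and the example date are hypothetical, and the holiday set is left empty as an assumption, so any real calendar would need the applicable holidays supplied.

```python
from datetime import date, timedelta


def add_business_days(start, days, holidays=frozenset()):
    """Return the date `days` business days after `start`.

    Saturdays, Sundays, and any dates in `holidays` are skipped.
    The start date itself is not counted.
    """
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5 and current not in holidays:
            remaining -= 1
    return current


# Hypothetical example: materiality determined on Thursday, 6 March 2025.
determined = date(2025, 3, 6)
deadline = add_business_days(determined, 4)
print(deadline.isoformat())
```

In this example the intervening weekend pushes the deadline to the following Wednesday, six calendar days after the determination.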

State authorities enforce equally severe frameworks. The New York Department of Financial Services amended Part 500, introducing aggressive requirements for Class A companies. The state defines Class A companies as entities generating over $20 million in New York revenue that also either report $1 billion in gross annual revenue or employ 2,000 workers [5]. These designated firms must conduct independent security audits annually and implement strict access controls. Because these jurisdictions overlap, vulnerability scanning & pen testing tools for insurance agents operating in New York must meet distinct compliance thresholds. Part 500 demands multi-factor authentication across all remote access points and 72-hour notification windows for security events. Regulators classify unsuccessful attacks as reportable incidents if they demonstrate significant systemic risk.
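
The Class A test summarized above is a two-part rule: a New York revenue floor, plus either a total revenue or a headcount condition. The sketch below encodes only that summary. The `Entity` type, its field names, and the handling of exact boundary values are assumptions, and the regulation's full definition may add conditions (such as look-back periods or affiliate treatment) that this sketch omits.

```python
from dataclasses import dataclass


@dataclass
class Entity:
    ny_revenue_usd: float     # revenue attributed to New York operations
    gross_revenue_usd: float  # total gross annual revenue
    employees: int


def is_class_a(entity: Entity) -> bool:
    """Apply the Class A test as summarized in the text above.

    Assumption: "over $20 million" is read as strictly greater than,
    while the $1 billion and 2,000-worker thresholds are read as
    "at least". Check the regulation for exact boundary treatment.
    """
    meets_ny_floor = entity.ny_revenue_usd > 20_000_000
    meets_size_test = (
        entity.gross_revenue_usd >= 1_000_000_000 or entity.employees >= 2_000
    )
    return meets_ny_floor and meets_size_test


# Hypothetical firms for illustration.
large_by_revenue = Entity(25e6, 1.5e9, 500)
large_by_headcount = Entity(25e6, 200e6, 3_000)
mid_sized = Entity(25e6, 200e6, 500)
print(is_class_a(large_by_revenue), is_class_a(large_by_headcount), is_class_a(mid_sized))
```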

Compliance frameworks drive predictable operational costs. System and Organization Controls 2 validation requires significant capital outlays for technology firms. A standard SOC 2 compliance initiative costs between $45,000 and $70,000 for mid-market organizations [6]. This total includes compliance platform subscriptions, implementation consulting, and the final certified public accountant audit. Enterprise organizations with complex digital architectures spend upward of $350,000 during their first year of SOC 2 compliance [7]. External penetration testing represents a mandatory line item in these budgets. Auditors require simulated attacks to validate internal control configurations.

Testing complexity determines exact pricing models. A standard network penetration test costs between $4,000 and $14,000 [8]. Web application testing runs slightly higher, averaging $6,000 to $15,000 per engagement. Cloud infrastructure assessments demand specific architectural knowledge, pushing costs between $5,000 and $50,000 depending on server volume and service configuration [9]. Organizations evaluating vulnerability scanning & pen testing tools for SaaS companies must factor in these annual retesting requirements. A black box test, where engineers possess no prior system knowledge, requires significant labor hours and commands higher baseline fees. Grey box assessments offer a practical middle ground, providing testers with partial system access to accelerate vulnerability discovery while maintaining realistic external attack scenarios.

Security engineers face an unsustainable math problem. The National Vulnerability Database recorded 40,289 Common Vulnerabilities and Exposures in 2024 [10]. This represents a 39% volume increase from the previous year. Teams cannot patch systems fast enough to mitigate this threat volume. Edgescan data shows the Mean Time To Remediate critical severity vulnerabilities sits at 65 days across full stack architectures [11]. Attackers move much faster. Current metrics indicate that 23.6% of known vulnerabilities face active exploitation on or before their public disclosure date [10]. Defenders operate at a structural disadvantage when remediation requires two months but exploitation occurs immediately.
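
The structural disadvantage described above can be expressed as an exposure window: the days between first exploitation and remediation. The sketch below uses the 65-day remediation figure from the text; the function name `exposure_days` and the dates are hypothetical.

```python
from datetime import date, timedelta


def exposure_days(first_exploit, remediated):
    """Days between first observed exploitation and remediation (never negative)."""
    return max(0, (remediated - first_exploit).days)


# Hypothetical timeline for one critical flaw.
disclosed = date(2024, 6, 3)
first_exploit = disclosed                    # exploited on the disclosure date
remediated = disclosed + timedelta(days=65)  # remediation time quoted in the text

print(exposure_days(first_exploit, remediated))
```

When exploitation begins on the disclosure date, the whole remediation period is exposure; the window shrinks to zero only if the fix lands before the first exploit.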

Prioritization mechanisms attempt to filter this noise. The Common Vulnerability Scoring System assigns base metrics to newly discovered flaws. Tenable research indicates a large discrepancy between CVSS scores and actual risk. Only 3% of all published vulnerabilities frequently result in impactful exposure [10]. Teams relying purely on high CVSS numbers waste labor hours patching theoretical flaws while ignoring less obvious, highly exploitable paths. The Exploit Prediction Scoring System emerged as an exploitation-focused complement to severity scoring. EPSS estimates the probability that a vulnerability will be exploited in the wild within the next 30 days. This shift allows security operations centers to concentrate resources on threats that attackers are likely to pursue.
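
The difference between severity-first and likelihood-first triage is easiest to see on a small example. The sketch below ranks findings by EPSS probability and uses CVSS only as a tiebreaker. The `Finding` type, the placeholder CVE identifiers, the scores, and the 0.1 threshold are all assumptions chosen for illustration, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    cve_id: str
    cvss: float  # severity score, 0.0 to 10.0
    epss: float  # exploitation probability, 0.0 to 1.0


def prioritize(findings, epss_threshold=0.1):
    """Rank by EPSS (then CVSS) and split into (urgent, backlog)."""
    ranked = sorted(findings, key=lambda f: (f.epss, f.cvss), reverse=True)
    urgent = [f for f in ranked if f.epss >= epss_threshold]
    backlog = [f for f in ranked if f.epss < epss_threshold]
    return urgent, backlog


# Placeholder identifiers and scores, not real CVE data.
findings = [
    Finding("CVE-0000-0001", cvss=9.8, epss=0.02),
    Finding("CVE-0000-0002", cvss=7.5, epss=0.91),
    Finding("CVE-0000-0003", cvss=6.1, epss=0.35),
]
urgent, backlog = prioritize(findings)
print([f.cve_id for f in urgent], [f.cve_id for f in backlog])
```

Here the finding with the highest CVSS score ends up in the backlog because its exploitation probability is low, which is the behavior the paragraph describes.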

Alert fatigue destroys analyst efficiency. Traditional scanning utilities generate massive volumes of false positive reports. Static application security testing tools parse source code without executing it, identifying exact line-of-code locations for suspected flaws. These tools routinely flag harmless code patterns as critical risks. Cycode research shows that security engineers waste 30% to 40% of their operational hours investigating non-issues generated by traditional scanners [12]. When organizations deploy multiple overlapping scanning tools, the false positive rate compounds. Different vendors assign varying risk scores to the exact same code pattern, forcing analysts into manual deduplication tasks.
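
The manual deduplication task described above can be partly automated by normalizing findings to a shared key. The sketch below merges reports that point at the same file, line, and weakness class, keeping the highest severity and recording which tools agreed. The record layout, tool names, and numeric severity scale are hypothetical; real scanners emit richer and less uniform output.

```python
def deduplicate(findings):
    """Merge findings that share (file, line, cwe).

    Each finding is a dict with hypothetical keys:
    tool, file, line, cwe, severity (higher number means more severe).
    """
    merged = {}
    for finding in findings:
        key = (finding["file"], finding["line"], finding["cwe"])
        if key not in merged:
            entry = {k: v for k, v in finding.items() if k != "tool"}
            entry["tools"] = [finding["tool"]]
            merged[key] = entry
        else:
            entry = merged[key]
            entry["tools"].append(finding["tool"])
            # Vendors may disagree on severity; keep the most severe rating.
            entry["severity"] = max(entry["severity"], finding["severity"])
    return list(merged.values())


# Illustrative reports from two hypothetical scanners.
reports = [
    {"tool": "scanner_a", "file": "app/db.py", "line": 42, "cwe": "CWE-89", "severity": 3},
    {"tool": "scanner_b", "file": "app/db.py", "line": 42, "cwe": "CWE-89", "severity": 4},
    {"tool": "scanner_a", "file": "app/views.py", "line": 10, "cwe": "CWE-79", "severity": 2},
]
unique = deduplicate(reports)
print(len(reports), "reports ->", len(unique), "unique findings")
```

Keeping the list of agreeing tools is a deliberate choice: a finding flagged by several independent scanners is often worth reviewing before one flagged by a single tool.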

Cross-ecosystem confusion causes many of these errors. Scanners frequently mismatch vulnerabilities across different programming languages with similar naming conventions. In 2023, the Grype scanning utility erroneously flagged Go applications using specific protobuf libraries as vulnerable to C++ flaws [13]. Anchore engineers identified 44 distinct instances of this exact matching error affecting major projects like Kubernetes and Prometheus. The Common Platform Enumeration system lacks the granularity to distinguish between identically named software implementations. Tool vendors recently shifted toward the GitHub Advisory Database to improve matching accuracy. This architectural change reduced false positive matches by over 2,000 instances in standard test sets while generating only 11 false negatives [13]. Management teams evaluating vulnerability scanning & pen testing tools for consulting firms demand low false positive rates to protect their analysts' billable hours.
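
The cross-ecosystem mismatch described above comes down to what the matcher uses as its key. The sketch below contrasts name-only matching with matching on both name and ecosystem. The advisory record, its identifier, and the field names are hypothetical, and this is not a representation of how Grype or any advisory database implements matching.

```python
# Hypothetical advisory: a flaw in a C++ library named "protobuf".
ADVISORIES = [
    {"id": "ADV-EXAMPLE-1", "ecosystem": "cpp", "package": "protobuf"},
]


def match_by_name(package, advisories):
    """Name-only matching: prone to cross-ecosystem false positives."""
    return [a["id"] for a in advisories if a["package"] == package["name"]]


def match_by_ecosystem(package, advisories):
    """Match on name and ecosystem together."""
    return [
        a["id"]
        for a in advisories
        if a["package"] == package["name"]
        and a["ecosystem"] == package["ecosystem"]
    ]


# A Go module that happens to share the library's name.
go_package = {"name": "protobuf", "ecosystem": "go"}

print(match_by_name(go_package, ADVISORIES))
print(match_by_ecosystem(go_package, ADVISORIES))
```

Name-only matching flags the Go module against the C++ advisory, while the ecosystem-aware matcher returns no match for it.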

Third-party software components present severe supply chain risks. Ransomware syndicates actively target external service vendors to achieve maximum blast radius. In May 2023, the Cl0p ransomware operation exploited CVE-2023-34362. This SQL injection vulnerability resided in Progress Software's MOVEit Transfer tool [14]. The attackers launched their campaign before the vendor disclosed the flaw. The breach cascaded across 2,700 organizations, exposing 93 million individual records and causing an estimated $12 billion in total damages [10]. This incident exposed the utter failure of perimeter-focused defense models.

Professional services agencies face similar indirect exposure. Threat actors compromise marketing platforms to extract high-value client lists. The ShinyHunters hacking group breached the analytics provider Mixpanel, leading to extortion demands against major downstream clients [15]. Supply chain compromises circumvent internal security controls. Procurement managers must demand strict third-party audits. Vendors implement vulnerability scanning & pen testing tools for digital marketing agencies specifically to prove data isolation between competing clients. Every external vendor introduces inherited risk. Network administrators must scan these connections continuously rather than relying on annual security questionnaires.

Gartner introduced Continuous Threat Exposure Management in 2022 to fix broken vulnerability workflows. Analysts define CTEM as a systematic process to continually evaluate the accessibility, exposure, and exploitability of digital assets [16]. Traditional management programs fail because they measure success by patch volume rather than risk reduction. CTEM forces teams to align their remediation efforts with actual threat vectors. The framework operates on a five-stage cycle: scoping, discovery, prioritization, validation, and mobilization [17]. Organizations adopting this specific model realize significant operational improvements. Gartner predicts that enterprises prioritizing investments based on a CTEM program will experience a two-thirds reduction in security breaches by 2026 [16].

Exposure assessment platforms merge distinct testing methodologies to support this framework. Breach and Attack Simulation tools generate simulated adversary traffic to test active defense configurations. Interactive application security testing observes software behavior during runtime, combining the strengths of static code analysis and dynamic web testing. This continuous validation loop shows which alerts matter. If a scanner detects a critical flaw, but the attack simulation cannot reach the vulnerable server because of strict network segmentation, the practical risk drops sharply for as long as that segmentation holds. Contextual intelligence prevents engineers from pursuing dead-end alerts. The goal shifts from patching everything to fixing first the exposures that grant attackers immediate network access.
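
The validation logic described above can be sketched as a small triage rule that combines scanner severity with simulation results. The function name `triage`, the queue names, and the dictionary keys are hypothetical; a real exposure management platform would weigh many more signals.

```python
def triage(finding):
    """Return a queue name for one finding.

    `finding` is a dict with hypothetical keys:
      severity  - scanner label, e.g. "critical" or "medium"
      reachable - True if the attack simulation could reach the asset
      validated - True if the simulated exploit actually succeeded
    """
    if finding["validated"]:
        return "fix-now"
    if not finding["reachable"]:
        # Compensating controls (such as segmentation) blocked the path.
        return "monitor"
    if finding["severity"] == "critical":
        return "fix-soon"
    return "backlog"


# A critical flaw on a segmented server that the simulation could not reach.
segmented = {"severity": "critical", "reachable": False, "validated": False}
# A medium flaw that the simulation successfully exploited.
proven = {"severity": "medium", "reachable": True, "validated": True}

print(triage(segmented), triage(proven))
```

The unreachable finding is routed to monitoring rather than closed, since a later change to network segmentation would make it exploitable again.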

Software composition analysis will dominate near-term purchasing decisions. Open-source libraries power modern development, but they introduce invisible dependencies. Developers download external packages without verifying the underlying code integrity. When a flaw emerges in a popular library, organizations spend weeks simply determining if their applications execute the vulnerable code. Future scanning platforms must generate accurate software bills of materials automatically during the build process.
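
A build-time inventory turns the weeks-long search described above into a lookup. The sketch below parses pinned dependencies into a simple inventory and checks it against a set of affected versions. The package names, versions, and function names are hypothetical, and the check only shows that a vulnerable version is present, not that the application executes the vulnerable code path. A production software bill of materials would use a standard format rather than a plain dictionary.

```python
def parse_pins(requirements_text):
    """Parse 'name==version' lines into a {name: version} inventory."""
    inventory = {}
    for raw_line in requirements_text.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments
        if "==" not in line:
            continue  # skip blanks and unpinned entries
        name, version = line.split("==", 1)
        inventory[name.strip().lower()] = version.strip()
    return inventory


def is_affected(inventory, package, affected_versions):
    """True if the inventory pins `package` at one of `affected_versions`."""
    version = inventory.get(package.lower())
    return version is not None and version in affected_versions


# Hypothetical pinned dependencies captured during a build.
pins = """
examplelib==2.4.1   # hypothetical package
otherlib==0.9.0
unpinned-tool
"""
inventory = parse_pins(pins)
print(is_affected(inventory, "ExampleLib", {"2.4.0", "2.4.1"}))
```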

Artificial intelligence alters the testing dynamic on both sides. Threat actors deploy large language models to automate vulnerability discovery and generate polymorphic exploit code. Defenders deploy similar neural networks to categorize alert severity and draft remediation scripts. Security vendors integrate predictive scoring into their dashboards to forecast which Common Vulnerabilities and Exposures will experience active exploitation within thirty days. Despite these automated advancements, human intelligence remains a mandatory component of the validation process. Automated tools excel at finding known patterns, but human penetration testers discover novel business logic flaws that software cannot comprehend. The most effective security programs will integrate continuous automated scanning with targeted human adversarial testing to maintain organizational resilience against evolving digital threats.