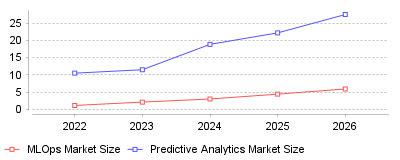

Recent market intelligence reveals an explosive growth trajectory in both the Machine Learning Operations (MLOps) and Predictive Analytics sectors, fundamentally reshaping how enterprises deploy artificial intelligence. The data highlights a massive transition from experimental machine learning projects to scaled, production-ready cloud environments, with market sizes projected to multiply rapidly.

| Year | MLOps Market Size (billions) | Predictive Analytics Market Size (billions) |
|---|---|---|
| 2022 | 1.1 | 10.5 |
| 2023 | 2.08 | 11.5 |
| 2024 | 2.98 | 18.89 |
| 2025 | 4.39 | 22.22 |
| 2026 | 5.9 | 27.56 |

This data illustrates a compounding expansion in the adoption of Machine Learning Operations (MLOps) and Predictive Analytics platforms globally, transitioning from billions to tens of billions in market valuation over a tight five-year window. The continuous year-over-year market size growth reflects a critical pivot where organizations are no longer just building analytical models, but are actively operationalizing them in secure, scalable, and automated cloud environments [1].
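The compounding described above can be checked with a little arithmetic. The following is a minimal sketch that computes year-over-year growth and the compound annual growth rate (CAGR) from the table's figures. The values are assumed to be in billions, and the function names `yoy_growth` and `cagr` are illustrative rather than taken from any cited source.

```python
# Market sizes copied from the table above (assumed to be in billions).
market = {
    2022: (1.1, 10.5),
    2023: (2.08, 11.5),
    2024: (2.98, 18.89),
    2025: (4.39, 22.22),
    2026: (5.9, 27.56),
}


def yoy_growth(values):
    """Return year-over-year growth rates as fractions."""
    return [(curr - prev) / prev for prev, curr in zip(values, values[1:])]


def cagr(start, end, periods):
    """Compound annual growth rate over the given number of yearly steps."""
    return (end / start) ** (1 / periods) - 1


years = sorted(market)
mlops = [market[y][0] for y in years]
predictive = [market[y][1] for y in years]
periods = years[-1] - years[0]  # four yearly steps between 2022 and 2026

mlops_cagr = cagr(mlops[0], mlops[-1], periods)
predictive_cagr = cagr(predictive[0], predictive[-1], periods)

for year, g_m, g_p in zip(years[1:], yoy_growth(mlops), yoy_growth(predictive)):
    print(f"{year}: MLOps {g_m:+.1%}, Predictive Analytics {g_p:+.1%}")
print(f"CAGR 2022-2026: MLOps {mlops_cagr:.1%}, "
      f"Predictive Analytics {predictive_cagr:.1%}")
```

On these figures the CAGR works out to about 52 percent for MLOps and about 27 percent for Predictive Analytics, so the smaller market is growing considerably faster than the larger one.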

At a micro level, individual enterprises are rapidly abandoning manual, siloed data science workflows in favor of integrated end-to-end solutions that merge software DevOps principles with machine learning pipelines [2]. This shift requires significant internal restructuring as companies demand faster model deployment, stringent regulatory compliance, and robust model governance to ensure reliability [3]. On a macro industry level, this signifies the maturation of the artificial intelligence sector into an industrialized ecosystem where cloud-based platforms and service providers capture immense financial value. Furthermore, the convergence of MLOps and Predictive Analytics means that heavily regulated industries like banking, healthcare, and retail can now leverage real-time, data-driven insights to drastically reduce operational friction and mitigate institutional risk [4].

The industrialization of these platforms is crucial because it bridges the massive gap between theoretical artificial intelligence research and tangible, production-level business value. Without robust MLOps frameworks, the vast majority of machine learning models would remain stuck in the experimental phase, plagued by data drift, compliance issues, and computational scaling bottlenecks [5]. By solving these deployment challenges, these platforms act as the foundational infrastructure that will allow the global economy to fully harness the productivity gains promised by the recent AI boom, creating measurable return on investment and distinct competitive advantages [6].

The rapid expansion of generative AI and deep learning research has created urgent demand for enterprise-grade infrastructure capable of handling massive compute loads and complex data requirements [7]. Concurrently, the global transition to cloud computing architectures has democratized access to scalable resources, allowing even small and medium-sized enterprises to bypass hefty initial hardware investments and adopt these predictive tools [8]. Furthermore, increasingly stringent data privacy regulations worldwide have forced companies to adopt formal governance and monitoring frameworks to ensure their algorithms remain compliant, explainable, and unbiased. It is also plausible that fierce competitive pressure in data-heavy sectors like finance and e-commerce has made predictive accuracy a matter of corporate survival, compelling immediate financial commitments to operationalize machine learning workflows [9].

The explosive growth of MLOps and Predictive Analytics platforms marks the definitive transition of artificial intelligence from a laboratory novelty to an essential core business infrastructure. As cloud integration deepens and workflow automation capabilities expand, these data platforms will quickly become as indispensable to modern enterprises as traditional software development toolchains. Ultimately, the prominent takeaway is that future market leadership will belong not merely to those who build the smartest algorithms, but to those who possess the operational infrastructure to efficiently scale, monitor, and govern them in real-world environments [10].

Eighty-eight percent of organizations regularly use artificial intelligence in at least one department. Only six percent report an earnings contribution exceeding five percent [1]. This execution failure defines the predictive analytics sector in 2025. Corporate buyers have largely abandoned software pilot programs. They now attempt to force models into daily production. The analytics market reached $17.3 billion in 2024 revenue [2]. Analysts project this category will hit $92.7 billion by 2034. Growth forecasts indicate a 17.2 percent compound rate during this decade.

Vendors face mounting pressure to prove operational value. Chief financial officers demand immediate cost reductions. Platform developers responded by bundling data engineering and model evaluation features. Buyers want unified control over data ingestion and model deployment. Organizations that fully integrate AI into workflows are rare. Just one percent of executives classify their companies as mature on the deployment spectrum [3]. Data silos and a lack of engineering talent throttle expansion.

Many enterprises spent 2024 rushing to implement pilot programs. They soon realized their data infrastructure could not support production models. Data silos prevent algorithms from accessing the necessary historical context. A model trained on incomplete marketing data cannot accurately predict sales outcomes. Engineering talent remains scarce and expensive. Companies struggle to hire data scientists capable of translating business problems into mathematical functions. This talent shortage drives the demand for low-code analytics platforms. Business analysts can now build predictive models without writing code. However, these simplified tools often lack the custom controls required for complex enterprise use cases.

Analysts project the total data analytics market will jump to $495.8 billion by 2034 [4]. The market encompasses software, hardware, and services. Local deployments still command 64 percent of the global share [5]. Cloud adoption accelerates rapidly. Large banks retain local servers for security purposes regardless of cloud benefits. A significant hurdle remains user adoption. Twenty-eight percent of enterprises cite a lack of intuitive tools as their primary barrier to deployment.

Snowflake posted $943.3 million in Q4 product revenue for fiscal 2025. This figure represents 28 percent year-over-year growth [6]. The company maintained a 126 percent net revenue retention rate. Snowflake expanded its footprint by integrating OpenAI models into its Cortex platform [7]. The company reported 580 customers spending more than $1 million annually on its product. This metric represents a 27 percent increase from the prior year. Remaining performance obligations reached $6.9 billion. These figures indicate that large enterprises are committing to multi-year contracts rather than monthly subscriptions.

Databricks secured a leadership position in the 2025 Gartner Magic Quadrant for Data Science and Machine Learning Platforms [8]. The company countered Snowflake by expanding its open-source integrations. Databricks champions a lakehouse architecture. This structure merges the flexibility of data lakes with the reliability of data warehouses. Gartner praised Databricks for allowing customers to govern models hosted outside of its platform. This flexibility appeals to chief information officers managing hybrid cloud environments.

DataRobot secured its Leader status by focusing heavily on strict governance and safety protocols [9]. Buyers prioritize platforms that track data lineage. Regulators increasingly demand proof of how a model arrived at a specific decision. Black-box algorithms face heavy scrutiny in finance and healthcare. IBM Watsonx targets this exact compliance need [10]. It provides enterprise guardrails for model deployment. AWS uses its massive infrastructure footprint to cross-sell SageMaker to current cloud customers [11]. The platform offers custom evaluation tools that trace the root cause of algorithmic errors.

Alteryx surpassed $1 billion in annual recurring revenue during 2025 [12]. Customers executed over 380 million automated workflows on its platform last year. Dataiku also maintains a strong enterprise presence. Its platform allows marketers to collaborate with data scientists on shared visual pipelines [13]. Technology buyers previously purchased separate tools for data storage, model training, and performance monitoring. They now favor integrated software suites. This consolidation restricts opportunities for niche startups.

Initial compliance with the European Union Artificial Intelligence Act requires heavy capital. Technology providers building regulated systems face quality management costs between EUR 193,000 and EUR 330,000 [14]. Annual maintenance for these frameworks demands an additional EUR 71,400. The European Round Table for Industry corroborated these figures in a joint statement [15].

The legislation establishes a tiered risk framework. Systems presenting minimal risk face no mandatory obligations. Spam filters and game algorithms fall into this category. Limited risk systems simply require transparency notices. Users must know they are interacting with artificial intelligence. High-risk systems face the most aggressive regulatory burden. These include tools used in critical infrastructure, education, and employment screening.

Providers of general-purpose models with systemic risk face additional requirements under Article 55 [16]. They must conduct adversarial testing to expose security vulnerabilities. They must track serious incidents and report them to the AI Office. The legislation takes effect in stages. General-purpose model rules apply in August 2025. High-risk system regulations begin in August 2026. Non-compliance triggers fines up to 35 million euros or seven percent of annual turnover.
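The tiered framework and the fine ceiling can be expressed as a small lookup plus one calculation. This is a minimal sketch, not a legal reference: the obligation strings paraphrase the text above, the names `RiskTier`, `OBLIGATIONS`, and `max_fine_eur` are hypothetical, and the code assumes that the higher of the two fine limits applies.

```python
from enum import Enum


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"


# Obligations paraphrased from the text above; illustrative only.
OBLIGATIONS = {
    RiskTier.MINIMAL: "no mandatory obligations",
    RiskTier.LIMITED: "transparency notice to users",
    RiskTier.HIGH: "heaviest regulatory burden",
}

# Example use cases named in the text, mapped to their tiers.
EXAMPLE_USE_CASES = {
    "spam filter": RiskTier.MINIMAL,
    "game algorithm": RiskTier.MINIMAL,
    "critical infrastructure": RiskTier.HIGH,
    "education": RiskTier.HIGH,
    "employment screening": RiskTier.HIGH,
}

FIXED_CAP_EUR = 35_000_000
TURNOVER_PERCENT = 7


def max_fine_eur(annual_turnover_eur):
    """Upper bound on a fine, ASSUMING the higher of the two limits applies."""
    turnover_cap = annual_turnover_eur * TURNOVER_PERCENT / 100
    return max(FIXED_CAP_EUR, turnover_cap)


for use_case, tier in EXAMPLE_USE_CASES.items():
    print(f"{use_case}: {tier.value} risk, {OBLIGATIONS[tier]}")
print(f"Ceiling at EUR 1 billion turnover: {max_fine_eur(1_000_000_000):,.0f}")
```

Under that assumption, the fixed cap dominates for firms with turnover below EUR 500 million, and the percentage cap dominates above it.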

Corporate advisors recognize this regulatory friction as a distinct business opportunity. Management teams actively seek statistical modeling tools designed for consulting practices to audit client algorithms. These platforms evaluate external datasets for hidden bias. They generate compliance reports that satisfy regulatory auditors. Consultants charge premium rates for this evaluation service. Small developers lack the capital to build these internal safeguards. Hospitals hesitate to purchase predictive diagnostic tools from startups that might fail compliance audits.

Industrial machinery rarely breaks down without warning. Sensors detect thermal variations or vibration anomalies weeks before a catastrophic failure occurs. Facility operators use machine learning to process this sensory data. Predictive protocols reduce unplanned downtime by 30 to 50 percent [17]. Organizations transitioning from reactive repairs to predictive schedules lower their maintenance expenditures by 18 to 25 percent.

Commercial heating systems consume 40 to 60 percent of building energy. They represent the single largest maintenance cost center in most facilities [18]. Traditional maintenance relies on calendar schedules. A technician replaces a filter every three months regardless of its actual condition. This approach wastes labor and materials. Alternatively, facilities run equipment until it breaks. This reactive strategy leads to catastrophic failures and tenant displacement.

Predictive analytics changes this operational dynamic entirely. A falling temperature differential in an air unit signals refrigerant loss [19]. The software generates a traceable alert before the compressor burns out. A chemical plant saved $57,000 annually by fixing 160 air leaks identified by acoustic sensors [20]. Industrial manufacturers lose $50 billion annually to unplanned downtime. Maintenance interventions cost 20 times less than equipment upgrades. Predictive software bridges this massive financial gap.

Building contractors rely heavily on continuous monitoring to scale their operations. Technicians deploy specialized analytics software for commercial climate control to prioritize their repair visits. Machine learning models identify over 95 percent of potential failures before they cause system shutdowns [21]. Connected sensors monitor current draw to detect efficiency drift. A motor drawing excess current in January gets repaired immediately. It does not run inefficiently until an annual audit catches the problem in October.
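The two signals described above, a falling temperature differential and rising motor current, can be illustrated with a simple drift check. This sketch uses a fixed threshold rule rather than a trained machine learning model, and the window sizes, thresholds, and sensor readings are all hypothetical values chosen for illustration.

```python
from statistics import mean


def drift_ratio(readings, baseline_n=5, recent_n=3):
    """Ratio of the recent average to the baseline average of a sensor series."""
    if len(readings) < baseline_n + recent_n:
        raise ValueError("not enough readings for baseline and recent windows")
    baseline = mean(readings[:baseline_n])
    recent = mean(readings[-recent_n:])
    return recent / baseline


def check_unit(delta_t, current_draw, drop_limit=0.85, rise_limit=1.10):
    """Return alert strings for one unit; thresholds are illustrative assumptions."""
    alerts = []
    if drift_ratio(delta_t) < drop_limit:
        alerts.append("temperature differential falling: possible refrigerant loss")
    if drift_ratio(current_draw) > rise_limit:
        alerts.append("motor current rising: possible efficiency drift")
    return alerts


# Hypothetical daily readings: temperature differential (degrees C)
# and motor current (amps).
delta_t = [10.0, 10.1, 9.9, 10.0, 10.0, 9.2, 8.6, 8.1, 7.9]
current_draw = [12.0, 12.1, 11.9, 12.0, 12.0, 12.2, 12.1, 12.0, 12.1]

alerts = check_unit(delta_t, current_draw)
for alert in alerts:
    print(alert)
```

A production system would typically replace the fixed ratios with a learned model of normal behavior per unit, but the alerting flow stays the same: compare recent readings to a baseline and raise a traceable alert when they diverge.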

Property owners constantly chase maximum occupancy. They balance rental rates against vacancy duration. Real estate trusts use regression algorithms to calculate optimal prices. These systems analyze lease data, economic indicators, and foot traffic [22]. The software recommends daily adjustments for available units. RealPage and Yardi dominate this market segment [23].

Property management previously relied on backward-looking data. Landlords checked neighborhood sales to set rent prices. This method fails during volatile economic periods. Rising interest rates and escalating construction costs squeeze profit margins. Managers must anticipate market shifts before they impact portfolio performance. Zillow uses algorithms to estimate home values with a median error rate of 5.9 percent [24]. Commercial operators demand similar precision for apartment complexes.

RealPage's revenue system increased rental yields by 5.4 percent for a major trust. Yardi's RENTmaximizer optimized pricing across 100,000 units for Lincoln Property Company. Property managers must establish pricing guardrails within these platforms. They restrict the algorithm from implementing steep daily fluctuations that might alienate prospective tenants. The system requires constant updates to competitive sets. Leasing agents must understand the algorithmic logic to successfully explain pricing variations to applicants.
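Pricing guardrails of the kind described above amount to clamping the model's recommendation. The sketch below is a hypothetical illustration and does not reflect how RealPage or Yardi implement their products. The function name `apply_guardrails`, the 2 percent daily limit, and the optional floor and ceiling are all assumptions.

```python
def apply_guardrails(current_rent, recommended_rent, max_daily_change=0.02,
                     floor=None, ceiling=None):
    """Clamp a recommended rent to a bounded daily move and optional hard limits."""
    lower = current_rent * (1 - max_daily_change)
    upper = current_rent * (1 + max_daily_change)
    rent = min(max(recommended_rent, lower), upper)
    if floor is not None:
        rent = max(rent, floor)
    if ceiling is not None:
        rent = min(rent, ceiling)
    return round(rent, 2)


# A steep recommended increase is limited to the daily maximum.
print(apply_guardrails(2000, 2300))
# A small adjustment inside the band passes through unchanged.
print(apply_guardrails(2000, 2010))
# A hard ceiling set by the property manager overrides the daily band.
print(apply_guardrails(2000, 2300, ceiling=2025))
```

Keeping the guardrail separate from the pricing model lets managers tighten or loosen the limits without retraining anything, and gives leasing agents a simple rule to explain to applicants.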

Institutional landlords depend on machine learning dashboards built for building administrators to track these metrics across thousands of units. Valuation models provide superior property assessments. These tools incorporate demographic shifts and development plans alongside sales data [25]. Commercial properties also deploy energy systems. Machine learning adjusts indoor temperatures based on weather forecasts and occupancy patterns. This precise control supports sustainability goals and lowers utility expenses.

Google Analytics 4 introduced automated predictive metrics. The software calculates purchase probability, churn risk, and expected revenue for website visitors [26]. It requires a baseline volume of conversion data to activate these features. Marketers push these audience segments directly into Google Ads. The integration bypasses demographic targeting.

Digital marketing faces an attribution crisis. Privacy regulations and browser updates restrict data tracking. Marketers struggle to prove which advertisement actually caused a sale. Predictive analytics solves this problem by modeling customer journeys. Attribution models replace simplistic last-click tracking. These systems determine each touchpoint's exact contribution to a conversion. Companies reallocate their budgets to the highest-performing marketing channels based on these insights.
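The difference between last-click tracking and a multi-touch model can be shown with a toy example. The sketch below compares last-click credit with linear attribution, which splits each conversion evenly across its touchpoints. Linear attribution is only one simple rule, swapped in here for illustration. Commercial platforms generally fit data-driven models instead, and the journeys and values below are invented.

```python
from collections import defaultdict


def last_click(journeys):
    """Credit each conversion's full value to the final touchpoint."""
    credit = defaultdict(float)
    for touchpoints, value in journeys:
        credit[touchpoints[-1]] += value
    return dict(credit)


def linear(journeys):
    """Split each conversion's value evenly across all of its touchpoints."""
    credit = defaultdict(float)
    for touchpoints, value in journeys:
        share = value / len(touchpoints)
        for channel in touchpoints:
            credit[channel] += share
    return dict(credit)


# Hypothetical customer journeys: (ordered touchpoints, conversion value).
journeys = [
    (["search", "email", "social"], 90.0),
    (["social", "search"], 60.0),
    (["email"], 30.0),
]

print("last-click:", last_click(journeys))
print("linear:    ", linear(journeys))
```

In this toy data, last-click assigns half of all revenue to social, while the linear rule credits the three channels equally. The choice of attribution rule can therefore change which channel appears to deserve more budget.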

Behavioral segmentation reaches extreme precision. Legacy marketing relied on demographic categories like age and zip code. Machine learning clusters users based on navigation speed, scroll depth, and interaction frequency. Software vendors like Pecan AI offer forecasting tools for consumer brands [27]. These platforms ingest transaction data and return lifetime value scores. Analysts use these scores to optimize promotional discounts and email outreach.

Advertising firms integrate these forecasts into their daily operations. Account directors use client forecasting tools designed for media buyers to justify budget allocations. B2B firms score incoming leads to prioritize sales outreach. A model analyzes a prospect's company size, recent funding, and web activity. The system assigns a numeric value indicating the likelihood of a closed deal. Sales representatives deprioritize low-scoring leads and focus on the highest-scoring prospects.
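Lead scoring of this kind is often a logistic model over a few features. The following is a minimal sketch using the three inputs named above. The weights, bias, feature scaling, and company names are hypothetical, and a real system would learn the weights from historical closed-deal data.

```python
import math

# Hypothetical weights; a real model would learn these from deal history.
WEIGHTS = {"log_employees": 0.4, "recent_funding": 1.2, "web_visits_30d": 0.15}
BIAS = -4.0


def score_lead(employees, recent_funding, web_visits_30d):
    """Return a 0-100 score from a logistic function of three lead features."""
    z = (BIAS
         + WEIGHTS["log_employees"] * math.log10(max(employees, 1))
         + WEIGHTS["recent_funding"] * (1.0 if recent_funding else 0.0)
         + WEIGHTS["web_visits_30d"] * web_visits_30d)
    probability = 1 / (1 + math.exp(-z))
    return round(100 * probability)


# Two invented prospects scored with the same model.
leads = {
    "acme": score_lead(employees=5000, recent_funding=True, web_visits_30d=12),
    "globex": score_lead(employees=40, recent_funding=False, web_visits_30d=1),
}

# Sort so that sales outreach starts with the highest-scoring prospect.
ranked = sorted(leads, key=leads.get, reverse=True)
for name in ranked:
    print(f"{name}: {leads[name]}")
```

The logistic function keeps every score between 0 and 100, which makes it straightforward to set a cutoff below which leads are deprioritized.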

Predictive analytics answers what will happen. Prescriptive analytics dictates what actions to take. The software market is shifting aggressively toward this latter category. Platforms no longer just alert a sales manager about a declining account. They automatically draft a retention email and schedule a phone call. Gartner anticipates a 20 percent increase in prescriptive analytics use over the next two years [28].

Autonomous agents represent the next technical milestone. These programs execute reasoning processes without human intervention. Startups like Cognition AI build agents that write and test software code [29]. Financial institutions deploy portfolio managers that trade equities autonomously. Executives expect employees to surrender a significant portion of their daily tasks to these agents. Sixteen percent of C-suite leaders predict employees will use generative agents for more than 30 percent of their work within a year [30].

Healthcare applications show massive potential. The healthcare analytics market will reach $8.4 billion by 2025 [28]. Models analyze patient histories to predict readmission risks. Hospitals allocate nursing staff based on these algorithmic forecasts. The technology improves patient outcomes while controlling labor costs. Businesses that fail to deploy automated workflows will suffer a competitive disadvantage. Machine learning drives a 20 to 30 percent productivity increase in the industries that adopt it [29].

Venture capital flows heavily toward autonomous applications. AI investment surpassed $100 billion in 2024, featuring 13 funding rounds exceeding $1 billion each. The SaaS model faces disruption. Analysts label the emerging category Services-as-Software. Vendors no longer sell a tool that a human must operate. They sell a digital worker that executes the entire process. The companies that successfully navigate these operational challenges will dominate the next decade of enterprise software.