Customer Analytics & Cohort Analysis Platforms

ZeroBounce's Activity Data gives email marketers precise engagement metrics, tracking subscriber interactions over the past 30 to 365 days. It supports targeted re-engagement campaigns and helps protect ROI and sender reputation, backed by 96%+ accuracy and robust security certifications.

Best for Customer Analytics Tools with Segmentation

Expert Take

ZeroBounce's Activity Data stands out by going beyond basic validation to provide deep, actionable engagement metrics. By identifying exactly which subscribers have opened, clicked, or forwarded emails within the last 30 to 365 days, it enables highly targeted re-engagement campaigns. Its enterprise-grade security, proven 96%+ accuracy, and impressive integration ecosystem make it a robust choice for serious email marketers looking to protect their sender reputation and boost ROI.

Pros

- 96-98% documented verification accuracy

- SOC 2, ISO 27001, and HIPAA certified

- Tracks subscriber engagement over the past 30 to 365 days

- Extensive native integrations and SDKs

Cons

- Expensive at higher sending volumes

- Requires minimum purchase of 2,000 credits

- Pricing structure can be confusing

Mixpanel is a powerful SaaS solution for businesses that want to understand and act on user behavior. It offers detailed product analytics and robust event tracking capabilities, enabling businesses to drive acquisition, growth, and retention. For professionals in the customer analytics industry, Mixpanel provides the tools necessary to segment customers and analyze their behavior in a comprehensive, data-driven manner.

Best for Customer Analytics Tools with Segmentation

Expert Take

Mixpanel excels in providing detailed user analytics and segmentation capabilities, making it a top choice for customer analytics professionals. Its robust event tracking and real-time data features are well-documented, and it maintains strong market credibility through third-party recognition. While it requires some technical expertise, its comprehensive features justify its premium positioning.

Pros

- Powerful event-based analytics

- Generative AI 'Spark' reporting

- Direct Data Warehouse sync

- SOC 2 & HIPAA compliant

- Generous startup program (1yr free)

Cons

- Steep learning curve

- Expensive at high volume

- A/B testing is Enterprise-only

- Complex initial implementation

- Free plan capped at 1M events

Best for teams that are

- Product teams and SaaS companies needing deep user behavior and retention analysis

- Startups to enterprises wanting granular event tracking and funnel analysis

- Companies focused on understanding specific user journeys within apps or websites

Skip if

- Marketers primarily seeking high-level traffic source and acquisition metrics

- Non-technical users who struggle with steep learning curves for event-based tracking

- Those needing a free, simple tool for basic pageview tracking without complex setup

Pendo Predict is an AI-powered churn prediction software specifically designed for businesses that need to analyze usage patterns, frequency, and engagement data to foresee customer attrition. It integrates AI models directly into the software, providing industry professionals with in-depth insights to prevent customer churn and enhance retention.

Best for Churn Analytics Tools

Expert Take

Pendo Predict is recognized for its advanced AI-powered capabilities in churn prediction, offering deep insights into customer behavior. It integrates seamlessly with existing platforms, providing valuable analytics that enhance customer retention. Despite its complexity and cost for smaller businesses, it remains a top choice for enterprises seeking to reduce churn.

Pros

- No data science team required

- Explainable AI (reasons for risk)

- Direct Salesforce & HubSpot sync

- Continuous model retraining

- Automated churn & upsell signals

Cons

- Opaque, expensive MAU-based pricing

- Steep platform learning curve

- Requires rigorous feature tagging

- Renewal price uplifts reported

- Complex initial setup

Best for teams that are

- Product-led SaaS companies focused on usage-based retention

- Product and CS teams wanting AI insights without data scientists

- Organizations already using Pendo for product analytics

Skip if

- Non-digital product businesses (requires software usage data)

- Companies needing purely financial or contract-based churn analysis

- Early-stage startups sensitive to higher pricing tiers

Statsig's Retention Cohort Analysis software is a cutting-edge tool designed specifically to help retention teams segment loyal and fickle users, thereby unlocking valuable insights into user behavior. The platform provides powerful strategies to boost engagement and reduce churn, addressing the key needs of businesses seeking to maximize customer retention.

Best for Cohort Analytics Tools for Retention Teams

Expert Take

Statsig Retention Cohort Analysis excels in providing retention teams with the ability to segment and analyze user behavior effectively. The platform's real-time insights and data visualization tools are well-documented, contributing to its strong usability and product capability. However, the lack of explicit pricing and limited integrations are notable tradeoffs.

Pros

- Unlimited free feature flags

- Generous free tier (2M events)

- Native integration of experiments & retention

- Warehouse-native deployment option

- Trusted by OpenAI and Notion

Cons

- Lifecycle analysis is 'Coming Soon'

- Limited funnel exploration depth

- Session replays capped at 1MB

- Newer ecosystem than Amplitude

- Less specialized for pure analytics

Best for teams that are

- Engineering-led product teams combining analytics with A/B testing

- Companies using feature flags to measure rollout impact on retention

- Teams wanting to tie cohort performance directly to experiment results

Skip if

- Marketing teams needing simple visual analytics without engineering context

- Organizations not utilizing feature flags or experimentation workflows

- Small teams wanting a basic, standalone dashboard for traffic stats

ThoughtSpot's Customer Analytics is a powerful tool for growth teams, transforming raw data into actionable insights. It helps businesses improve customer experience and drive retention by understanding customer behavior and trends, which is crucial in a highly competitive market.

Best for Customer Analytics Tools for Growth Teams

Expert Take

ThoughtSpot Customer Analytics excels in providing growth teams with actionable insights through its robust analytics capabilities. Its real-time data processing and effective customer segmentation support data-driven decision-making. The platform's scalability and market credibility further establish it as a top choice in customer analytics tools.

Pros

- AI-powered natural language search

- Gartner Magic Quadrant Leader 2025

- Strong embedded analytics SDK

- SOC 2, ISO 27001 & HIPAA compliant

- Scalable cloud-native architecture

Cons

- High entry price ($15k/year min)

- Steep learning curve for data modeling

- Limited visualization customization options

- Performance lag with massive datasets

- Opaque consumption-based pricing

Best for teams that are

- Non-technical business users who want to query data using natural language

- Companies with a modern cloud data stack (e.g., Snowflake, Databricks)

- Organizations wanting to democratize data access beyond data teams

Skip if

- Small teams without a centralized, clean data warehouse infrastructure

- Users preferring traditional, static pre-built dashboards over search

- Companies with messy or unstructured data that isn't ready for querying

Best for teams that are

- Non-technical business users who want to query data using natural language

- Companies with a modern cloud data stack (e.g., Snowflake, Databricks)

- Organizations wanting to democratize data access beyond data teams

Skip if

- Small teams without a centralized, clean data warehouse infrastructure

- Users preferring traditional, static pre-built dashboards over search

- Companies with messy or unstructured data that isn't ready for querying

Pros

- AI-powered natural language search

- Gartner Magic Quadrant Leader 2025

- Strong embedded analytics SDK

- SOC 2, ISO 27001 & HIPAA compliant

- Scalable cloud-native architecture

Cons

- High entry price ($15k/year min)

- Steep learning curve for data modeling

- Limited visualization customization options

- Performance lag with massive datasets

- Opaque consumption-based pricing

Expert Take

ThoughtSpot Customer Analytics excels in providing growth teams with actionable insights through its robust analytics capabilities. Its real-time data processing and effective customer segmentation support data-driven decision-making. The platform's scalability and market credibility further establish it as a top choice in customer analytics tools.

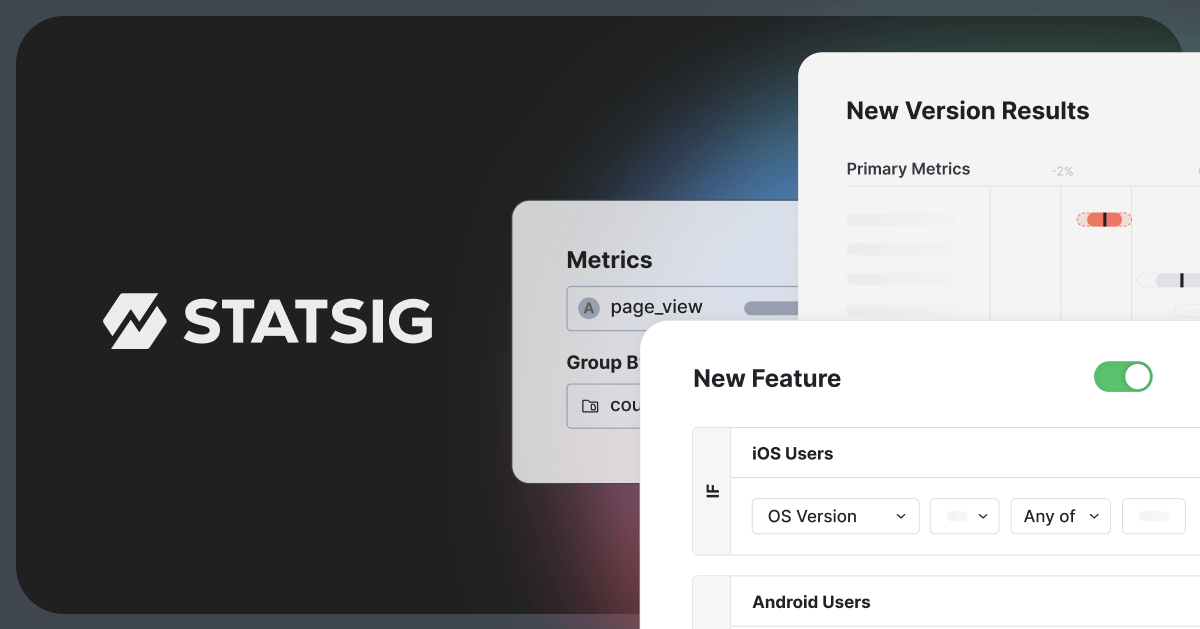

Hex Cohort Analysis is a solution for retention teams, providing tools for tracking and analyzing the behavior of customer cohorts. It addresses the need for precise, data-driven insights in customer and cohort analytics, focusing on the patterns of customers who share common experiences.

Best for Cohort Analytics Tools for Retention Teams

Expert Take

Hex Cohort Analysis excels in providing retention teams with precise, data-driven insights into customer behavior through its cohort analytics tools. Its user-friendly interface and customizable features make it a valuable asset for businesses aiming to enhance customer engagement. Despite limited pricing transparency, its capabilities and market credibility position it as a top-tier solution.

Pros

- Seamless SQL and Python interoperability

- HIPAA-compliant multi-tenant option

- Deep integration with dbt and Snowflake

- Interactive apps for non-technical stakeholders

- High-accuracy 'Magic' AI assistance

Cons

- Limited native chart customization

- Performance lag in large notebooks

- Usage-based compute costs can scale

- Steeper learning curve for non-coders

- Less suitable for pixel-perfect reporting

Best for teams that are

- Data teams using SQL/Python to build custom retention models

- Analysts wanting to turn code-based analysis into interactive apps for stakeholders

- Teams needing flexible, notebook-style data exploration over rigid dashboards

Skip if

- Non-technical users seeking an out-of-the-box, no-code analytics solution

- Teams needing simple auto-capture event tracking without data engineering

- Marketers looking for standard pre-built web traffic reports

Usermaven is a comprehensive analytics platform designed specifically for growth teams looking to leverage data-driven insights to fuel their strategies. Its real-time reporting, precise attribution, and intuitive interface make it ideal for teams that need to understand customer behavior, track performance metrics, and make quick, informed decisions.

Best for Customer Analytics Tools for Growth Teams

Expert Take

Usermaven excels as a customer analytics tool with its real-time insights and precise attribution capabilities, tailored for growth teams. Its user-friendly interface and robust reporting are well-documented, supporting its premium positioning. While pricing transparency is limited to custom quotes, its feature depth justifies its value.

Pros

- Privacy-first cookie-less tracking (GDPR compliant)

- Bypasses ad-blockers via white-labeling

- Unifies website and product analytics

- Auto-capture events without coding

- Generous free tier for startups

Cons

- Limited e-commerce analytics depth

- Report customization can be restrictive

- Documentation lacks advanced use cases

- Fewer integrations than mature rivals

- Trends tool locked to premium plans

Best for teams that are

- Growth teams wanting a privacy-friendly, easy-to-use GA4 alternative

- Marketers needing auto-capture and attribution without coding skills

- SaaS companies requiring both web and product analytics in one view

Skip if

- Enterprises requiring complex SQL querying or raw data access

- Teams needing deep predictive modeling or data science capabilities

- Large organizations with highly complex custom data governance needs

Gainsight's software is specifically tailored to the needs of businesses seeking to enhance customer retention. It provides advanced customer churn analysis, a critical element in understanding customer behavior and mitigating losses. This SaaS solution offers predictive analytics, enabling proactive measures against potential churn and promoting customer satisfaction and loyalty.

Best for Churn Analytics Tools

Expert Take

Gainsight Customer Churn Analytics is recognized for its advanced capabilities in predictive analytics and customer retention strategies. The product is well-regarded in the industry for its ability to provide actionable insights and customizable dashboards, making it a top choice for businesses aiming to reduce churn and enhance customer loyalty.

Pros

- Advanced AI churn prediction (Horizon/Staircase)

- Deeply customizable health scoring models

- Market-leading enterprise credibility

- Extensive integration ecosystem (Salesforce, S3)

- Robust 'Customer 360' unified view

Cons

- Steep learning curve for new users

- High cost and opaque pricing

- Long implementation timelines

- Requires dedicated admin resources

- Overkill for small/mid-sized teams

Best for teams that are

- Large enterprises with high-touch Customer Success models

- Teams needing deep, complex integrations with Salesforce

- Organizations requiring a comprehensive CS platform beyond just analytics

Skip if

- Early-stage startups or small teams (high complexity and cost)

- Companies looking for a simple, plug-and-play tool

- Low-touch B2C models where individual account management is rare

Mailchimp's customer segmentation tool is a must-have for marketing professionals seeking to optimize their campaign strategies. It provides in-depth customer analytics and segmentation capabilities, allowing for targeted communication and higher open rates. Its platform is specifically designed to handle the dynamic needs of modern marketing campaigns.

Best for Customer Analytics Tools with Segmentation

Expert Take

Mailchimp Customer Segmentation stands out for its robust analytics and user-friendly interface, making it a top choice for marketing professionals. Its integration capabilities and customizable segmentation features further enhance its value, although its email-centric focus may limit broader application.

Pros

- Advanced builder supports unlimited nested conditions

- Predictive analytics for customer lifetime value

- Seamless integration with 300+ external apps

- Intuitive pre-built segments for quick targeting

- Deep e-commerce data syncing for behavioral triggers

Cons

- Basic plan limited to only 5 conditions

- Charged for unsubscribed and cleaned contacts

- Advanced segments take hours to update data

- Support response times can be slow

- Complex segments may experience generation lag

Best for teams that are

- SMBs and e-commerce stores already using Mailchimp for email marketing

- Marketers needing simple segmentation based on campaign engagement and purchase history

- Users wanting an easy, non-technical way to target emails without complex infrastructure

Skip if

- Enterprises requiring complex, multi-condition logic beyond basic limits

- Teams needing advanced cross-platform identity resolution or anonymous user tracking

- Businesses not using Mailchimp as their primary marketing platform

DriveTrain offers an advanced cohort analysis tool that is designed to address the needs of retention teams. By grouping customers based on shared traits and tracking their behavior over time, it helps uncover growth opportunities and identify patterns, which are critical for businesses focused on customer retention and loyalty.

Best for Cohort Analytics Tools for Retention Teams

Expert Take

DriveTrain Cohort Analysis excels in providing deep insights into customer behavior, which is crucial for retention teams. Its intuitive interface and data-driven approach make it a valuable tool for developing personalized retention strategies. While it requires initial data gathering and has custom pricing, its capabilities justify its premium positioning.

Pros

- 800+ native integrations for data consolidation

- Plain English formulas for non-finance users

- Real-time cohort analysis and segmentation

- Fast 5.7-month average ROI

- Highly responsive customer support team

Cons

- Temperamental data syncs reported by users

- Steep learning curve for initial setup

- Reliance on support for complex data transforms

- No transparent pricing available online

- Limited self-service data linking options

Best for teams that are

- B2B SaaS Finance and FP&A teams forecasting revenue and churn

- Teams analyzing financial cohorts like Net Dollar Retention (NDR) and LTV

- Companies needing to model future cash flow based on customer cohorts

Skip if

- Product managers seeking behavioral UX insights like clicks or heatmaps

- Teams needing mobile app performance or crash tracking

- Marketers analyzing top-of-funnel website traffic sources
