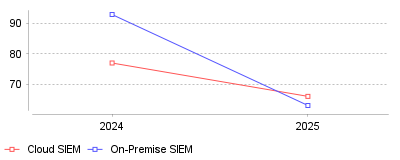

| Year | Cloud SIEM ($ per seat) | On-Premise SIEM ($ per seat) |
|---|---|---|
| 2024 | 77 | 93 |
| 2025 | 66 | 63 |

Recent market data illustrates a striking inversion in the cost dynamics of Security Information and Event Management (SIEM) platforms: on-premise deployments are now cheaper per seat than cloud-based solutions. On-premise SIEM averaged $93 per seat in 2024 compared to $77 for cloud platforms, but dropped sharply to $63 per seat in 2025, falling below the cloud average of $66 [1]. This drop from $93 to $63 represents a roughly 32% year-over-year cost reduction for legacy infrastructure, highlighting a major shift in pricing strategies [1].

On a macro level, this price inversion suggests that the widely accepted mantra of "cloud is always cheaper" is coming to an end within the security analytics sector [1]. Cloud-based SIEMs are increasingly viewed as premium services designed for organizations prioritizing AI-driven automation and seamless integration with XDR and SOAR platforms [1]. Conversely, on-premise vendors are repositioning themselves as the value choice for businesses grappling with massive volumes of telemetry and stringent data retention requirements. At a micro level, security operations center (SOC) leaders and CISOs now face a polarized purchasing landscape where they must strictly weigh the operational agility of cloud against the raw, high-volume log storage economics of on-premise systems.

This trend is critical because modern enterprises are facing an explosion of telemetry data from multi-cloud environments, edge devices, and remote workforces. As data ingestion volumes skyrocket, organizations utilizing pay-by-ingest or usage-based cloud SIEM models frequently see their budgets unexpectedly quadruple [2]. Furthermore, high computing costs associated with newly integrated Generative AI features in cloud SIEMs are being passed down to end-users, forcing budget-conscious businesses to rethink their infrastructure entirely [1].

The primary driver behind this shift appears to be an aggressive price war initiated by legacy on-premise vendors who are desperate to stem the tide of cloud migration and encourage cloud repatriation [1]. By significantly slashing licensing fees, these traditional players are capitalizing on the growing dissatisfaction with unpredictable cloud consumption billing. Additionally, cloud vendors are investing heavily in processing-intensive AI models and acquiring adjacent capabilities like Attack Surface Management to build unified platforms, which intrinsically raises their operational overhead [1]. Speculatively, we may also be seeing the natural maturation of legacy hardware and software amortization, allowing older vendors to offer rock-bottom pricing while remaining profitable in a specialized niche.

The SIEM market is structurally fracturing into two distinct tiers: a premium cloud tier offering AI automation, and a budget-friendly on-premise tier optimized for massive data storage. Organizations must now conduct rigorous total cost of ownership analyses that account not only for user seats but also for projected data ingestion growth and required AI capabilities over the next five years. Ultimately, the main takeaway is that for high-volume, budget-conscious log storage, legacy on-premise SIEM has ironically re-emerged as the most cost-effective choice in modern security operations [1].

Cisco finalized its $28 billion cash acquisition of Splunk in March 2024 [1]. The transaction purchased the data provider at $157 per share [2]. This deal united a hardware vendor with a software platform. Consolidation accelerated rapidly following this announcement. Buyers evaluating privacy and compliance software now face a shrinking pool of independent vendors.

Private equity firm Thoma Bravo announced a merger between LogRhythm and Exabeam in May 2024 [3]. The unified company launched two months later under the Exabeam brand [4]. Exabeam previously held a $2.5 billion valuation [5]. Palo Alto Networks announced an agreement to acquire IBM's QRadar SaaS assets that same month [3]. IBM divested the cloud-delivered product while keeping its on-premises offering, redirecting cloud customers to Palo Alto. Choosing security analytics platforms requires navigating these changing ownership structures.

Fewer competitors reduce pricing leverage for technology buyers. Sales representatives push bundled agreements across expanded portfolios. Cisco expects the Splunk acquisition to generate positive cash flow within its first fiscal year [2]. Meanwhile, Palo Alto Networks plans to transition QRadar users to its Cortex platform. Software migrations demand significant engineering hours. Transitioning rulesets between query languages creates operational friction for security personnel.

Global category revenue reached $6.36 billion in 2024 [6]. IMARC Group projects total spending will climb to $15.05 billion by 2033, reflecting a 9.54% annual growth rate [6]. SkyQuest calculates a steeper expansion, estimating the 2024 market size at $8.33 billion and a 16.8% annual growth rate that reaches $33.69 billion by 2033 [7]. Demand stems from increased attack surfaces and strict mandates. Capital expenditures rise continuously as networks expand.
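The two forecasts can be sanity-checked with compound growth arithmetic. The sketch below is illustrative only: it assumes both rates are compound annual rates and that 2024 to 2033 spans nine compounding years, neither of which the sources above state explicitly. The function names are hypothetical.

```python
def project(base: float, annual_rate: float, years: int) -> float:
    """Compound a base value forward at a fixed annual growth rate."""
    return base * (1 + annual_rate) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Annual growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1 / years) - 1


# Figures quoted above, in billions of USD.
# Assumption: 2024 -> 2033 is nine compounding years.
skyquest_2033 = project(8.33, 0.168, 9)
imarc_implied = implied_cagr(6.36, 15.05, 9)

print(f"SkyQuest projection for 2033: ${skyquest_2033:.2f}B")
print(f"IMARC implied annual rate from a 2024 base: {imarc_implied:.2%}")
```

Under these assumptions the SkyQuest figures reproduce to roughly $33.7 billion, while the IMARC endpoints imply about 10% per year rather than 9.54%. One possible explanation is that the IMARC forecast window starts from a later base year.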

Volume metrics explain this financial inflation. Organizations ingest a median of 3.7 terabytes of telemetry daily [8]. Legacy pricing penalizes this growth by charging customers per gigabyte. Splunk Enterprise deployments cost approximately $150 per gigabyte ingested daily [9]. Total first-year expenses for organizations managing substantial volumes range from $400,000 to $800,000 [9]. Cloud subscriptions run roughly 33% higher than on-premises equivalents [9].
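A rough calculation shows how the median volume and the per-gigabyte rate combine. This is a minimal sketch, assuming the $150 figure is an annual price per gigabyte of daily ingest capacity and that a terabyte is metered as 1,000 GB. Both are assumptions, and `annual_license_cost` is a hypothetical helper.

```python
GB_PER_TB = 1000  # assumption: decimal terabytes


def annual_license_cost(daily_tb: float, usd_per_gb_per_day: float) -> float:
    """Annual cost when the license is priced per GB of daily ingest capacity."""
    return daily_tb * GB_PER_TB * usd_per_gb_per_day


# Median daily volume and per-GB rate quoted above.
median_cost = annual_license_cost(daily_tb=3.7, usd_per_gb_per_day=150)
print(f"${median_cost:,.0f}")
```

Under these assumptions the median organization lands at $555,000, inside the $400,000 to $800,000 first-year range cited above.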

Alternative billing structures attempt to disrupt these legacy models. Microsoft Sentinel charges $5.22 per gigabyte under a consumption framework [9]. Free ingestion for native Microsoft sources alters the baseline calculation for Azure-centric organizations. Migrating from physical servers to Sentinel reportedly reduces total ownership costs by 44% over three years [10]. Finance executives increasingly press CISOs to justify escalating budgets.

Security teams implement data sampling to control ingestion costs. Administrators reduce retention periods or selectively filter logs. Dropping telemetry creates visibility gaps during incident investigations. Attackers exploit these blind spots to maintain persistence without generating indexed alerts. Cost constraints directly degrade threat detection efficacy.
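The trade-off can be illustrated with a small filter. The sketch below always keeps high-severity events and keeps a deterministic sample of everything else. The severity labels, field names, and 10% rate are hypothetical, and real pipelines typically apply this logic in a log forwarder rather than in application code.

```python
import hashlib

ALWAYS_KEEP = {"high", "critical"}  # hypothetical severity labels


def keep_event(event: dict, sample_rate: float) -> bool:
    """Keep all high-severity events; keep a deterministic sample of the rest."""
    if event.get("severity") in ALWAYS_KEEP:
        return True
    # Hash the event ID so the same event always gets the same decision.
    digest = hashlib.sha256(str(event["id"]).encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
    return bucket < sample_rate


events = [
    {"id": i, "severity": "critical" if i % 50 == 0 else "info"}
    for i in range(10_000)
]
kept = [e for e in events if keep_event(e, sample_rate=0.10)]
dropped = len(events) - len(kept)
print(f"kept {len(kept)} of {len(events)} events; {dropped} never reach the index")
```

Every dropped event is one an investigator cannot query later, which is the visibility gap described above.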

Alert volume overwhelms human capacity. Operations centers receive thousands of automated notifications daily. Seventy-three percent of organizations identify false positives as their primary detection challenge in 2025 [11]. This represents a sharp increase from previous reporting periods: the share of respondents reporting "very frequent" false positives jumped from 13% to 20% year-over-year [11].

Investigating a single anomaly consumes 15 to 30 minutes of analyst time [11]. Processing 1,000 daily alerts with a 50% false-positive rate burns 125 to 250 analyst hours per day on benign events alone. Detection engineering fails to outpace background noise. Analysts suffer acute fatigue from chasing benign anomalies. Industry turnover reached 28% annually [11]. Average employment tenure shrank below 18 months in high-stress environments [11].
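The labor estimate follows directly from the figures in this paragraph. A minimal sketch of the arithmetic, with `triage_hours` as a hypothetical helper name:

```python
def triage_hours(daily_alerts: int, false_positive_rate: float, minutes_per_alert: float) -> float:
    """Analyst hours spent per day investigating alerts that turn out to be benign."""
    false_positives = daily_alerts * false_positive_rate
    return false_positives * minutes_per_alert / 60


low = triage_hours(1000, 0.50, 15)
high = triage_hours(1000, 0.50, 30)
print(f"{low:.0f} to {high:.0f} analyst hours per day")
```

Assuming eight-hour shifts, that workload corresponds to roughly 16 to 31 analysts doing nothing but dismissing false positives.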

Labor shortages compound this localized burnout. The global talent gap reached 4.8 million professionals in 2024 [11]. Organizations cannot simply hire additional analysts to review endless queues. Seventy-one percent of analysts experience severe burnout [11]. Security professionals cite alert fatigue as the primary driver behind this exhaustion. The investigation model breaks down under these mathematical realities.

Industry regulations dictate specific retention policies. Generic configurations fail to satisfy specialized auditors. System administrators must customize parsing rules to match vertical requirements. Financial penalties force adherence to these strict governance frameworks.

Accounting firms manage sensitive tax records and financial disclosures. Threat detection is critical for maintaining client trust. A compliance monitoring system for accountants can track every database query containing identification numbers. Internal Revenue Service rules mandate specific access controls. Identifying unauthorized file exports prevents reputational damage during tax season.

Insurance brokerages hold vast repositories of health information. State regulators enforce data security laws strictly. Deploying a security analytics tool for insurance agents automates evidence collection for annual audits. These platforms correlate endpoint alerts with cloud logins. Brokers demonstrate regulatory compliance through standardized reporting dashboards.

Creative agencies serve as vulnerable supply chain links to enterprise targets. Threat actors compromise vendor networks to steal authentication tokens. Implementing threat detection systems for advertising teams protects shared campaign assets. Agencies handle unreleased product images and corporate messaging. Securing remote workstations requires unified visibility across diverse hardware environments.

Federal regulators demand rapid public transparency following network breaches. The Securities and Exchange Commission adopted Item 1.05 of Form 8-K on July 26, 2023 [12]. This rule requires public companies to disclose material cyber incidents [13]. Filings must occur within four business days of determining an event meets the materiality threshold [12]. Initial disclosures must describe the nature, scope, and timing of the breach.

Companies cannot delay reporting while waiting for complete forensic details. The commission expects initial filings even when investigations remain active. Extensions exist only if the Attorney General determines immediate disclosure poses a substantial national security risk [13]. Filers must submit an amended form within four business days of discovering new material information [12].
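The four-business-day window is easy to miscount across a weekend. The sketch below is a simplified illustration that skips Saturdays and Sundays only. It does not account for federal holidays, and the determination date is hypothetical.

```python
from datetime import date, timedelta


def add_business_days(start: date, days: int) -> date:
    """Advance `days` business days from `start`, skipping Saturdays and Sundays."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            remaining -= 1
    return current


determination = date(2024, 6, 20)  # hypothetical Thursday materiality determination
deadline = add_business_days(determination, 4)
print(deadline.isoformat())  # 2024-06-26, the following Wednesday
```

A determination made on a Thursday leaves only two working days before the weekend, so the clock effectively starts the moment materiality is decided.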

Materiality assessments depend entirely on accurate log data. Legal counsel needs exact timelines showing when threat actors accessed specific servers. Analysts query central databases to calculate the precise number of compromised records. Slow search performance directly jeopardizes regulatory deadlines. Platforms utilizing legacy indexing architectures struggle to return results for broad queries across petabytes of stored telemetry.

Ransomware payments do not negate these reporting obligations. The SEC Division of Corporation Finance issued updated compliance interpretations on June 24, 2024 [14]. Companies making extortion payments to halt ongoing attacks must still conduct formal materiality assessments [14]. Erasing the operational disruption does not erase the reporting requirement. Financial impacts factor heavily into the materiality calculation.

Network boundaries dissolved during remote work migrations. Traditional firewalls cannot inspect traffic originating from unmanaged personal devices. The human element presents the greatest vulnerability. Threat data shows 80% of breaches involved compromised or misused privileged credentials [15]. Phishing-based identity attacks impacted 69% of organizations in 2024 [15].

State-aligned threat actors escalated the use of adversary-in-the-middle techniques. These attacks hijack legitimate session cookies after users successfully authenticate [15]. Once inside the network, attackers use native administrative tools to navigate lateral pathways. Normal system commands evade standard malware signatures. Analytics platforms must correlate seemingly routine user actions across multiple applications to detect these covert intrusions.

Performance indicators reflect the health of security operations. Mean Time to Detect measures how quickly software identifies potential incidents. Mean Time to Respond calculates the duration required to contain identified threats. A high signal-to-noise ratio indicates that alerts carry meaningful intelligence. Cloud environments generate 100 times more security data than traditional systems. Organizations process nearly 7,000 alerts to identify a single genuine incident [16]. Average cloud breach detection takes between four and twelve days [16].
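These indicators can be computed from incident timestamps. The sketch below uses two hypothetical incident records and assumes detection time is measured from the initial malicious activity and response time from detection to containment. Teams define these boundaries differently.

```python
from datetime import datetime, timedelta
from statistics import mean

# Hypothetical incident records: (occurred, detected, contained)
incidents = [
    (datetime(2025, 1, 1, 8, 0), datetime(2025, 1, 1, 12, 0), datetime(2025, 1, 1, 14, 0)),
    (datetime(2025, 1, 5, 9, 0), datetime(2025, 1, 5, 17, 0), datetime(2025, 1, 5, 21, 0)),
]


def mean_hours(deltas: list[timedelta]) -> float:
    """Average a list of durations and express the result in hours."""
    return mean(d.total_seconds() for d in deltas) / 3600


mttd = mean_hours([detected - occurred for occurred, detected, _ in incidents])
mttr = mean_hours([contained - detected for _, detected, contained in incidents])

# Signal ratio using the alert-to-incident figure quoted above.
signal_ratio = 1 / 7000

print(f"MTTD: {mttd:.1f} h, MTTR: {mttr:.1f} h, signal ratio: {signal_ratio:.4%}")
```

With roughly 7,000 alerts per genuine incident, the signal ratio is about 0.014%, which is why prioritization matters more than raw alert volume.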

Software vendors pitch artificial intelligence as the antidote to human fatigue. Response playbooks attempt to isolate compromised endpoints without analyst intervention. Machine learning models establish baseline behavior profiles. Deviations from these baselines trigger elevated risk scores rather than binary alerts.
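Baseline-driven scoring can be sketched with a simple z-score. This is an illustrative stand-in, not any vendor's model: the scaling factor, the cap, and the login counts are hypothetical, and production systems use far richer features.

```python
from statistics import mean, stdev


def risk_score(baseline: list[float], observed: float, cap: float = 100.0) -> float:
    """Map the deviation from a behavioral baseline to a 0-100 risk score."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        return 0.0 if observed == mu else cap
    z = abs(observed - mu) / sigma
    return min(cap, z * 20)  # hypothetical scaling: 5 standard deviations -> 100


logins_per_day = [4, 5, 6, 5, 4, 6, 5]  # hypothetical history for one user

for observed in (5, 6, 40):
    print(observed, round(risk_score(logins_per_day, observed), 1))
```

A graded score lets analysts rank a mildly unusual day below an extreme outlier instead of treating both as identical alerts.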

Palo Alto Networks anchors its growth strategy on this automated operational model. The company reported $8.0 billion in total revenue for fiscal year 2024 [17]. Recurring revenue grew 32% year-over-year, reaching $5.6 billion in the fourth quarter [18]. Hardware appliance sales constitute a shrinking fraction of corporate income. Software subscriptions drive primary financial expansion.

Market adoption of integrated platforms shows measurable enterprise traction. The Cortex XSIAM product secured approximately 400 enterprise customers by mid-2024 [18]. These accounts generate an average recurring revenue exceeding $1 million each [18]. Buyers display a willingness to replace distinct tools with unified suites. Centralized data processing eliminates the need for fragile API integrations.

Competitors pursue similar consolidation strategies. Identity security firm CyberArk entered a definitive agreement to acquire Venafi for $1.54 billion in May 2024 [5]. Cloud security provider Wiz raised $1 billion at a $12 billion valuation to expand its application protection platform [5]. Buyers seek fewer vendor relationships to reduce procurement complexity.

Transitioning from legacy systems requires substantial capital expenditure. CISOs must justify software migrations to corporate boards. Training junior analysts on proprietary query languages stalls operational velocity during deployment phases. Log data often remains siloed in storage arrays for years to satisfy compliance mandates.

Data lake architectures offer a technical compromise. Separating storage infrastructure from query compute engines reduces retention costs. Organizations dump raw telemetry into cloud object storage. Analytical engines pull data into active memory only when specific investigations require historical context. This decoupling fundamentally changes the economics of security monitoring.

Future market leadership depends on improving the signal-to-noise ratio. Security platforms must contextualize alerts using identity logs, cloud posture data, and endpoint telemetry simultaneously. Software that merely aggregates logs into a central interface adds zero operational value. Algorithms must accurately prioritize threats to reverse the rising tide of analyst burnout.

Legacy indexing databases constrain modern security operations. Traditional platforms structure data upon ingestion to accelerate future searches. This schema-on-write approach requires immense computing power during the initial collection phase. Ingesting unpredictable cloud telemetry breaks these formatting rules. Processing delays create unacceptable latency between malicious actions and alert generation.

Modern data lakes embrace a schema-on-read architecture. Security tools write unformatted logs directly into cloud storage buckets. The platform applies structural formatting only when analysts execute specific queries. This fundamental architectural difference allows organizations to store years of network activity cost-effectively.
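The schema-on-read idea can be shown in a few lines. In this minimal sketch an in-memory list stands in for a cloud storage bucket, and the log lines and field names are hypothetical. Parsing happens only inside `query`, never inside `ingest`.

```python
import json

# Stand-in for an object storage bucket: raw lines are written untouched.
raw_store: list[str] = []


def ingest(line: str) -> None:
    """Write path: no parsing or validation, just append the raw record."""
    raw_store.append(line)


def query(field: str, value: str) -> list[dict]:
    """Read path: parse each record only now, skipping lines that do not fit."""
    matches = []
    for line in raw_store:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # malformed data was still accepted at ingest time
        if isinstance(record, dict) and record.get(field) == value:
            matches.append(record)
    return matches


ingest('{"user": "alice", "action": "login", "src_ip": "10.0.0.5"}')
ingest('{"user": "bob", "action": "export", "bytes": 1048576}')
ingest("unstructured syslog line without JSON")
print(query("user", "bob"))
```

The write path never rejects a record, so unexpected formats cannot stall collection. The cost of interpreting them is deferred to the moment an analyst actually searches.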

Decoupling storage from computing power solves the pricing dilemma. Security operations centers no longer pay premium analytics rates to store compliance data they rarely query. Compute clusters remain dormant until an active incident requires investigation. Analysts spin up massive processing power temporarily to execute complex threat hunts across petabytes of stored logs. Billing strictly measures the computing resources consumed during active queries.

Automation features rely on these deep historical repositories. Training effective machine learning models requires massive datasets to establish accurate behavioral baselines. Truncated retention periods cripple algorithmic accuracy. Providing unlimited historical storage enables predictive scoring systems to recognize subtle deviations that indicate advanced persistent threats.

Table formats accelerate this transition away from proprietary databases. Apache Iceberg and Delta Lake standards allow multiple analytical tools to query the same data repository. Security teams avoid vendor lock-in by retaining control over their storage buckets. If a primary analytics tool becomes too expensive, organizations simply connect a different compute engine to their existing data lake.

Purchasing a new analytics platform solves only the architectural constraints. Operationalizing the software requires extensive engineering. Default detection rules often generate excessive noise because every corporate network is unique. Detection engineers must manually tune algorithmic thresholds to account for legitimate business operations.

Integration challenges plague platform migrations. Connecting internal applications to a centralized security tool requires custom API development. Many mainframes lack modern logging capabilities. Security teams must deploy lightweight forwarding agents across thousands of distributed servers to collect operating system telemetry. Administrators frequently block these deployments due to CPU performance concerns.

Automated response capabilities demand mature internal processes. Response playbooks can execute disastrous commands if triggered incorrectly. A false positive could prompt the platform to isolate a database server automatically. This sudden quarantine would cause a production outage. Operations centers must establish strict governance protocols before enabling automated remediation features.
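One way to express such a governance protocol is a policy gate in front of every automated action. The sketch below is a hypothetical illustration: the host names, the confidence threshold, and the action labels are assumptions rather than features of any specific platform.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    host: str
    confidence: float  # detection confidence in [0, 1]


# Hypothetical inventory of assets whose isolation would cause an outage.
CRITICAL_HOSTS = {"db-prod-01", "payments-gw"}
AUTO_ISOLATE_THRESHOLD = 0.95  # assumed policy value


def decide(alert: Alert) -> str:
    """Return the action a playbook may take under the governance policy."""
    if alert.host in CRITICAL_HOSTS:
        return "require_human_approval"  # never quarantine production automatically
    if alert.confidence >= AUTO_ISOLATE_THRESHOLD:
        return "auto_isolate"
    return "open_ticket"


print(decide(Alert(host="db-prod-01", confidence=0.99)))
print(decide(Alert(host="laptop-4471", confidence=0.97)))
print(decide(Alert(host="laptop-4471", confidence=0.60)))
```

Checking asset criticality before confidence means even a highly confident false positive cannot take a production database offline without a human decision.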