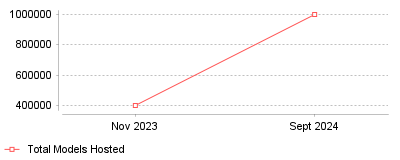

| Date | Total Models Hosted on Hugging Face |
|---|---|
| Nov 2023 | 400,000 |
| Sept 2024 | 1,000,000 |

Recent data indicates a split in the "Source Code Hosting" category: the definition of a repository is expanding to include massive AI model weights alongside traditional code. While GitHub reported a 98% year-over-year increase in global Generative AI projects in 2024 [1], the dedicated AI platform Hugging Face saw its hosted models grow from approximately 400,000 in late 2023 to over 1 million by September 2024 [2][3]. That is a roughly 2.5-fold increase in about ten months, a pace that suggests the storage and sharing of pre-trained models is growing faster than traditional application code.
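To put the two Hugging Face data points on a comparable footing, the growth can be expressed as a compound rate. The sketch below is only a back-of-the-envelope calculation: it assumes a ten-month span (November 2023 to September 2024) and smooth compounding, neither of which the sources state, and it measures hosted models rather than the "projects" GitHub counts.

```python
def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    return end / start


def compound_monthly_rate(start: float, end: float, months: int) -> float:
    """Constant month-over-month rate that would turn `start` into `end`."""
    return (end / start) ** (1 / months) - 1


# Figures from the table above; the 10-month span is an assumption.
MODELS_NOV_2023 = 400_000
MODELS_SEPT_2024 = 1_000_000
MONTHS = 10

multiple = growth_multiple(MODELS_NOV_2023, MODELS_SEPT_2024)
monthly = compound_monthly_rate(MODELS_NOV_2023, MODELS_SEPT_2024, MONTHS)
annualized = (1 + monthly) ** 12 - 1  # extrapolated to 12 months

print(f"multiple: {multiple:.1f}x")
print(f"implied monthly growth: {monthly:.1%}")
print(f"implied annualized growth: {annualized:.0%}")
```

Under these assumptions the implied annualized rate is about 200%, which is the basis for saying model hosting is growing faster than the 98% figure GitHub reported for Generative AI projects.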

On a micro level, this trend signifies that the modern developer's toolkit has fundamentally changed; developers are now "assembling" AI applications using pre-made models rather than writing all logic from scratch. This is further evidenced by Python overtaking JavaScript as the most popular language on GitHub for the first time in history, driven largely by data science and AI activity [4]. On a macro industry level, Hugging Face is cementing its status as the "GitHub of AI," creating a dual-platform ecosystem where GitHub holds the application code (the "body") and Hugging Face holds the weights (the "brain"). This shift suggests that the value in open-source software is migrating toward the data and model weights, necessitating new infrastructure capable of handling massive binary files rather than just text-based source code.

This trend is critical because it redefines software supply chain security and infrastructure requirements. As organizations integrate open-source models (now numbering over a million) into their codebases, they face new risks related to "pickled" model files and unverified weights, distinct from traditional code vulnerabilities [5]. Furthermore, the sheer volume of AI projects—with GitHub noting a 59% surge in contributions to GenAI projects in 2024 alone—indicates that AI competence is no longer a niche skill but a baseline requirement for the global developer workforce [1].
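The risk with "pickled" model files is that Python's pickle format can instruct the loader to import and call arbitrary objects while deserializing. A minimal sketch of how a file can be inspected without being loaded, using the standard library's `pickletools`, is shown below. The opcode list and the `Payload` class are illustrative assumptions, not a complete scanner; legitimate checkpoints also use some of these opcodes, so a production tool would compare the imported names against an allowlist.

```python
import pickle
import pickletools

# Opcodes that import or call Python objects during unpickling.
# Illustrative list, not an exhaustive security policy.
SUSPICIOUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX", "REDUCE",
}


def suspicious_opcodes(data: bytes) -> set[str]:
    """Return risky opcode names found in a pickle, without unpickling it."""
    return {
        opcode.name
        for opcode, _arg, _pos in pickletools.genops(data)
        if opcode.name in SUSPICIOUS_OPCODES
    }


class Payload:
    """Hypothetical stand-in for a malicious object.

    Unpickling it would call print(); a real attack would call something worse.
    """

    def __reduce__(self):
        return (print, ("code ran during unpickling",))


plain = pickle.dumps({"layer.weight": [0.1, 0.2, 0.3]})  # data only
risky = pickle.dumps(Payload())  # serialized, never loaded here

print("plain:", sorted(suspicious_opcodes(plain)))
print("risky:", sorted(suspicious_opcodes(risky)))
```

The scan only reads the opcode stream, so the payload is never executed.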

The primary driver is likely the democratization of "open-weight" models (such as Llama 3 and Mistral) which empowered individual developers to fine-tune and upload their own variants without needing the resources to train a model from scratch. Additionally, the release of tools like LoRA (Low-Rank Adaptation) drastically reduced the file size and compute cost required to customize models, fueling the explosion in model counts on Hugging Face. The 98% surge on GitHub also correlates with the mainstream adoption of AI coding assistants (like Copilot), which lower the barrier to entry for coding, allowing more developers to create and contribute to complex AI projects [6].
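The file-size reduction attributed to LoRA follows from simple parameter counting: instead of storing a full update for a `d x k` weight matrix, LoRA stores two low-rank factors of shapes `d x r` and `r x k`. The sketch below works through that arithmetic. The dimensions and rank are illustrative assumptions and do not describe any specific model.

```python
def full_update_params(d: int, k: int) -> int:
    """Parameters in a full-rank update to a d x k weight matrix."""
    return d * k


def lora_update_params(d: int, k: int, r: int) -> int:
    """Parameters in a rank-r LoRA update: one d x r and one r x k factor."""
    return r * (d + k)


# Illustrative values only: a 4096 x 4096 projection adapted at rank 8.
D, K, RANK = 4096, 4096, 8

full = full_update_params(D, K)
lora = lora_update_params(D, K, RANK)

print(f"full: {full:,}  LoRA: {lora:,}  reduction: {full // lora}x")
```

With these example values the adapter for one matrix holds 256 times fewer parameters than a full update, which is why fine-tuned variants are cheap to store and upload.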

The "Source Code Hosting" category is evolving into an "Asset Hosting" ecosystem. The main takeaway is that, over the next decade of development, proficiency in managing model repositories will be as vital as managing Git workflows. Developers and organizations must adapt to a hybrid workflow where code lives on GitHub but the differentiating intelligence lives on platforms like Hugging Face.

Global IT spending will reach $5.61 trillion in 2025, a 9.8% increase over the previous year, with software and data center systems leading this expansion [1]. Within this massive expenditure, the sector for Source Code Hosting & Repositories is undergoing a forced contraction of providers, leaving CIOs with fewer, more powerful options. The most significant signal of this contraction occurred in July 2024, when Amazon Web Services (AWS) closed new customer access to AWS CodeCommit, effectively deprecating the service for new market entrants [2].

This decision forces a binary choice for many engineering leaders: Microsoft’s GitHub or the independent GitLab. Microsoft revealed in July 2024 that GitHub had reached a $2 billion annual revenue run rate, driven largely by the adoption of its AI tool, Copilot [3]. In contrast, GitLab reported fiscal year 2025 revenue of approximately $759 million [4]. The revenue disparity, with GitHub's run rate at roughly 2.6 times the annual revenue of its closest competitor, illustrates a market tipping toward a dominant player, creating risks regarding pricing power and vendor lock-in.

Operational teams must now treat repository migration not as a one-time project but as a continuous competency. The AWS CodeCommit deprecation caught many infrastructure teams off guard, forcing unplanned migrations to GitHub or GitLab. Engineering directors who previously viewed source control as a static utility must now evaluate the viability and roadmap of their hosting providers. The risk is no longer just technical; it is existential for the toolchain. If a hyperscaler like AWS can exit the source control market, smaller, niche players are at least as likely to be discontinued or to fail.

Generative AI has fundamentally altered the volume of code entering repositories, but this velocity introduces a hidden tax on maintainability. GitClear analyzed 211 million lines of code changed between 2020 and 2024 and found a disturbing trend: code churn—the percentage of code revised within two weeks of being written—jumped to 7.9% in 2024, compared to 5.5% in 2020 [5]. This rise in churn indicates that while developers generate syntax faster, the initial quality is lower, necessitating immediate rework.
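The churn metric described above can be sketched as a simple ratio: lines revised within 14 days of being written, divided by all lines written. The function below is a minimal illustration under that definition. The input format is a hypothetical simplification, and GitClear's actual methodology may differ in how it attributes and counts revisions.

```python
from datetime import date, timedelta
from typing import Optional

CHURN_WINDOW = timedelta(days=14)  # "within two weeks of being written"


def churn_rate(lines: list[tuple[date, Optional[date]]]) -> float:
    """Share of lines revised within the churn window.

    Each item is (written_on, revised_on); revised_on is None if the line
    was never revised.
    """
    if not lines:
        return 0.0
    churned = sum(
        1
        for written, revised in lines
        if revised is not None and revised - written <= CHURN_WINDOW
    )
    return churned / len(lines)


# Hypothetical history for four lines of code.
history = [
    (date(2024, 1, 1), date(2024, 1, 10)),  # revised after 9 days: churn
    (date(2024, 1, 1), date(2024, 2, 1)),   # revised after 31 days: not churn
    (date(2024, 1, 1), None),               # never revised
    (date(2024, 1, 5), None),               # never revised
]

print(f"churn rate: {churn_rate(history):.1%}")
```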

Operational leaders at SaaS companies face a specific challenge: the rapid accumulation of technical debt disguised as feature velocity. The same study revealed that the incidence of "copy/pasted" code surged from 8.3% to 12.3% over the same period, while code refactoring declined [6]. This data suggests that AI assistants encourage a "fire and forget" mentality, where developers accept suggested blocks without understanding the underlying logic or checking for duplication.

The financial impact of this trend is measurable in review cycles. If 63% of professional developers now use AI in their workflow [7], and the defect rate is rising, senior engineers must spend disproportionately more time reviewing junior or AI-generated pull requests. An analysis by Google's DORA (DevOps Research and Assessment) program estimated a 7.2% decrease in delivery stability for every 25% increase in AI adoption [5]. For a generic engineering team of 50 developers with an average salary of $150,000 (a $7.5 million payroll), treating a rounded 7% loss in stability as lost engineering time equates to a $525,000 annual efficiency loss, which could negate the theoretical speed gains of the AI tools.
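The efficiency-loss estimate above is straightforward arithmetic, sketched here so the inputs can be swapped for a team's own numbers. Mapping a percentage drop in delivery stability directly onto payroll is the simplifying assumption of the estimate itself, not a measured relationship, and the function name is purely illustrative.

```python
def annual_efficiency_loss(team_size: int, avg_salary: float, loss_rate: float) -> float:
    """Payroll cost attributed to lost stability, as a fraction of total salary."""
    return team_size * avg_salary * loss_rate


# Inputs taken from the paragraph above.
TEAM_SIZE = 50
AVG_SALARY = 150_000
LOSS_RATE = 0.07  # rounded from the 7.2% DORA estimate

payroll = TEAM_SIZE * AVG_SALARY
loss = annual_efficiency_loss(TEAM_SIZE, AVG_SALARY, LOSS_RATE)

print(f"payroll: ${payroll:,.0f}")
print(f"estimated annual loss: ${loss:,.0f}")
```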

January 17, 2025, marked a definitive shift in the regulatory environment for European financial institutions and their technology suppliers. The Digital Operational Resilience Act (DORA) moved from a preparation phase to full enforcement [8]. Unlike previous guidelines that focused on data privacy (GDPR), DORA mandates operational resilience, specifically requiring financial entities to conduct advanced threat-led penetration testing and manage third-party ICT risks.

This regulation directly impacts repository management. Financial institutions must now maintain a register of information regarding their use of third-party ICT service providers, including source code hosting platforms. If a bank uses a SaaS-based repository, that provider falls under the scrutiny of European Supervisory Authorities. Operational teams need reporting and analytics tooling to track and report on the resilience of these dependencies. The regulation forces a transition from passive vendor management to active auditing of the software supply chain.

The NIS2 directive further expands these requirements beyond finance to critical sectors like energy, transport, and health. NIS2 requires organizations to address supply chain security, including vulnerability handling and disclosure [9]. For repository administrators, this means that "security by obscurity" is legally indefensible. You must prove that your source code hosting environment has automated scanning, access controls, and a tested disaster recovery plan. Failure to comply can result in administrative fines and, under NIS2, personal liability for senior management.

Security failures in the repository layer are growing in both frequency and severity. Cybersecurity Ventures projects the global annual cost of software supply chain attacks will reach $60 billion in 2025 [10]. This figure represents a 30% increase from 2023, driven by attackers shifting focus from hardened production environments to softer development pipelines. The repository is the new endpoint.

Malicious packages are the primary vector. Sonatype reported a 156% year-over-year increase in malicious packages within open-source ecosystems in their 2024 analysis [11]. Attackers publish packages with names similar to popular libraries (typosquatting) or hijack abandoned projects to inject malware. When a developer’s build pipeline pulls these compromised dependencies, the malicious code executes immediately inside the company network. This vector bypasses traditional firewalls because the traffic originates from a trusted internal build process requesting data from a public registry.
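One common defense against typosquatting is to compare each requested dependency name against a list of packages the organization actually trusts and flag near-misses. The sketch below uses the standard library's `difflib.SequenceMatcher` for the comparison. The package list and the 0.8 threshold are illustrative assumptions; a real check would draw on registry popularity data and a tuned threshold.

```python
from difflib import SequenceMatcher
from typing import Optional

# Illustrative list of trusted package names, not a real policy.
KNOWN_PACKAGES = ("requests", "numpy", "pandas", "urllib3")


def likely_typosquat(
    name: str,
    known: tuple[str, ...] = KNOWN_PACKAGES,
    threshold: float = 0.8,
) -> Optional[str]:
    """Return the trusted package that `name` closely resembles, or None.

    An exact match is treated as legitimate and returns None.
    """
    candidate = name.lower()
    if candidate in known:
        return None
    for package in known:
        similarity = SequenceMatcher(None, candidate, package).ratio()
        if similarity >= threshold:
            return package
    return None


for requested in ("requests", "reqeusts", "pandass", "flask"):
    match = likely_typosquat(requested)
    status = f"resembles '{match}'" if match else "ok"
    print(f"{requested}: {status}")
```

Running a check like this in the build pipeline, before dependencies are fetched, addresses the problem at the point where the firewall cannot.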

Hardcoded credentials, or "secrets," remain a persistent vulnerability. GitGuardian detected 23.8 million new hardcoded secrets on public GitHub repositories in 2024, a 25% increase from the previous year [12]. The problem is particularly acute for digital marketing agencies, where developers manage hundreds of client API keys and tokens. A single accidental commit to a public repository can expose dozens of client environments. GitGuardian found that 70% of secrets leaked in 2022 were still active in 2025 [12], indicating that remediation processes are failing. Organizations are detecting leaks but failing to rotate the compromised credentials.
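Detection of hardcoded secrets is usually pattern-based. The sketch below scans text line by line with two regular expressions: one for the well-known `AKIA` prefix format of AWS access key IDs, and one generic heuristic for quoted values assigned to key-like names. Both patterns are illustrative assumptions and would produce false positives and negatives in practice; dedicated scanners use far larger rule sets.

```python
import re

# Illustrative patterns only; real scanners maintain hundreds of rules.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "generic_assignment": re.compile(
        r"""(?i)(?:api[_-]?key|secret|token)\s*[:=]\s*["'][^"']{16,}["']"""
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each suspected secret."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, label))
    return findings


# The AWS value is the placeholder key used in AWS documentation.
sample = "\n".join([
    'aws_key = "AKIAIOSFODNN7EXAMPLE"',
    'API_KEY = "0123456789abcdef0123"',
    "timeout = 30",
])

for lineno, label in find_secrets(sample):
    print(f"line {lineno}: possible {label}")
```

A finding is only the first step: given that most leaked secrets stay active for years, each hit should trigger rotation of the credential, not just removal of the line.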

The era of heavily subsidized CI/CD infrastructure is ending. GitHub announced a significant change to its billing model for GitHub Actions: a platform charge for self-hosted runners starting March 1, 2026 [13]. The proposal involved a $0.002 per minute fee for the orchestration of jobs running on customer-owned infrastructure. GitHub later postponed the rollout for re-evaluation after backlash, but the move signals that the "control plane" for CI/CD will no longer be free.

This pricing shift targets the arbitrage model used by many mature engineering organizations. Historically, companies reduced costs by using GitHub for orchestration (free) while running the heavy compute workloads on their own cheap AWS Spot Instances or on-premise servers. GitHub's move to monetize the orchestration layer forces companies to recalculate their total cost of ownership. For an organization running 100,000 minutes of self-hosted build time per month, the proposed fee works out to $200 per month; the charge scales linearly with build volume, so larger fleets face overhead that was never budgeted.
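Because the proposed charge is a flat per-minute rate, its impact is easy to model across build volumes. The sketch below assumes the $0.002 per minute figure from the postponed proposal applies to every self-hosted minute with no free allowance; the volumes other than 100,000 minutes are hypothetical.

```python
PROPOSED_FEE_PER_MINUTE = 0.002  # USD, from the postponed proposal


def monthly_orchestration_fee(minutes: int, fee: float = PROPOSED_FEE_PER_MINUTE) -> float:
    """Monthly platform charge for self-hosted runner minutes."""
    return minutes * fee


# 100,000 is the example from the text; the larger volumes are hypothetical.
for minutes in (100_000, 1_000_000, 10_000_000):
    monthly = monthly_orchestration_fee(minutes)
    print(f"{minutes:>10,} min/month -> ${monthly:>9,.2f}/month, ${monthly * 12:>10,.2f}/year")
```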

Large File Storage (LFS) is another area seeing aggressive monetization. GitHub Enterprise accounts created after June 2, 2024, are subject to metered billing for LFS, moving away from the pre-paid data pack model [14]. Under the new model, storage costs $0.07 per GiB per month, and bandwidth costs $0.0875 per GiB. For gaming studios or ML teams storing terabytes of binary data, this shift eliminates the predictability of flat-rate billing. Teams must now implement strict retention policies and pruning scripts to prevent storage costs from ballooning.
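The metered LFS rates quoted above can be turned into a rough monthly estimate. The sketch below applies the two per-GiB rates directly and ignores any included quota or discounts, which is an assumption; the 2 TiB storage and 500 GiB bandwidth figures are hypothetical inputs for a team with large binary assets.

```python
STORAGE_RATE_PER_GIB_MONTH = 0.07  # USD, from the metered LFS pricing above
BANDWIDTH_RATE_PER_GIB = 0.0875    # USD, from the metered LFS pricing above


def monthly_lfs_cost(stored_gib: float, bandwidth_gib: float) -> float:
    """Estimated monthly LFS bill, ignoring any free allowance."""
    storage = stored_gib * STORAGE_RATE_PER_GIB_MONTH
    bandwidth = bandwidth_gib * BANDWIDTH_RATE_PER_GIB
    return storage + bandwidth


# Hypothetical workload: 2 TiB stored, 500 GiB downloaded per month.
STORED_GIB = 2 * 1024
BANDWIDTH_GIB = 500

cost = monthly_lfs_cost(STORED_GIB, BANDWIDTH_GIB)
print(f"estimated LFS cost: ${cost:,.2f}/month")
```

Since both terms grow with usage, the estimate makes clear why retention policies and pruning matter: every GiB kept or re-downloaded shows up on the bill.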

Gartner predicts that by 2026, 80% of software engineering organizations will establish platform engineering teams, up from 45% in 2022 [15]. This shift represents a fundamental change in how repositories are consumed. The repository is no longer just a storage bucket for code; it is becoming the backend database for an Internal Developer Platform (IDP). These platforms abstract the complexity of cloud infrastructure, allowing developers to self-serve environments and deployments via a unified interface.

The next phase of this evolution involves AI agents. Unlike the current generation of "copilots" that suggest code snippets, agents will autonomously perform multi-step tasks such as triaging bugs, writing tests, and executing refactors. Microsoft and GitHub are positioning their platforms to support these "agentic workloads," where the repository acts as the environment for AI agents to operate [16]. This transition will require stricter repository governance. If an AI agent can commit code, the branch protection rules, code owner reviews, and CI/CD gates must be strong enough to prevent automated errors from reaching production.

Organizations that fail to formalize their platform engineering strategy risk drowning in the operational complexity of these new tools. The disparity between elite performers and low performers will widen, defined not by who writes the best code, but by who builds the most resilient platform for their code to live in.