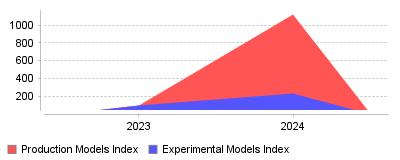

Research into the "AI Model Deployment & MLOps Platforms" category points to a sharp shift from experimental AI to operational deployment over the last year. According to the Databricks "State of Data + AI 2024" report, the number of AI models registered for production surged by 1,018% year-over-year, vastly outpacing the 134% growth in experimental models logged <a href="https://www.databricks.com/discover/state-of-data-ai" rel="nofollow">[1]</a> <a href="https://www.databricks.com/blog/state-ai-enterprise-adoption-growth-trends" rel="nofo

| Year | Production Models Index (2023 = 100) | Experimental Models Index (2023 = 100) |
|---|---|---|
| 2023 | 100 | 100 |
| 2024 | 1118 | 234 |

The data reveals a dramatic divergence between the volume of AI models being experimented with and those actually reaching production. While experimental activity remains healthy with a 134% increase, the registration of models for production environments has grown by 1,018% (roughly 11 times the prior-year volume) in the last recorded year [1] [2]. Additionally, supporting infrastructure for deployment, such as vector databases used for Retrieval-Augmented Generation (RAG), saw 377% year-over-year growth [2].
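The index values in the table follow directly from the reported growth rates. The short sketch below reproduces that arithmetic; the function name `growth_to_index` is a hypothetical helper introduced here for illustration, and the base of 100 for 2023 is the indexing convention the table appears to use.

```python
def growth_to_index(growth_pct: float, base: float = 100.0) -> float:
    """Convert a year-over-year growth percentage into an index value.

    A growth of g% on a base of 100 gives an index of 100 + g.
    """
    return base * (100 + growth_pct) / 100


production_2024 = growth_to_index(1018)    # production models, 2024
experimental_2024 = growth_to_index(134)   # experimental models, 2024

print(production_2024, experimental_2024)  # 1118.0 234.0
# Multiple of the prior-year level, which is where "about 11x" comes from.
print(production_2024 / 100)
```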

This trend signifies a fundamental maturity shift in the AI industry, moving from a "training-centric" mindset to an "inference-centric" reality. For years, the industry struggled with "pilot purgatory," where data science teams built models that never left the notebook; now, organizations are successfully integrating AI into downstream applications at scale [3] [1]. In the macro view, this indicates that the massive capital expenditure on GPUs and AI tooling is finally beginning to convert into operational expenditure and tangible product features. For the MLOps sector specifically, it means the primary challenge is shifting from "how do we version data?" to "how do we monitor and govern live GenAI agents?" as inference costs and reliability become the new bottlenecks [4] [5].

This shift is critical because it validates the commercial viability of the current AI boom, proving that enterprises are finding value beyond mere R&D. It also fundamentally changes the requirements for MLOps platforms; tools that strictly focus on model training are losing ground to those that excel in governance, vector retrieval, and live inference monitoring [5]. Furthermore, the 1,018% surge suggests that "shadow AI" is likely increasing, necessitating stricter governance frameworks (like Unity Catalog) to manage the thousands of models suddenly flooding production environments [2].

The primary driver is undoubtedly the adoption of Generative AI and Large Language Models (LLMs), which have a significantly lower barrier to deployment than traditional supervised learning models. Unlike a fraud detection model that requires months of feature engineering, a pre-trained LLM can be "registered" as a model via a simple wrapper or prompt chain, instantly counting as a "production deployment" [6] [3]. Additionally, the rapid maturation of "LLMOps" tools—such as automated evaluation chains and vector stores—has streamlined the path from prototype to product. Finally, intense top-down pressure from the C-suite to show returns on AI investments has likely forced engineering teams to prioritize shipping functional wrappers over endless experimentation [7].

The era of hoarding data and experimenting in isolation is ending; the era of mass AI deployment has arrived. The roughly 11x growth in production models suggests that Generative AI has lowered the barrier to ML capabilities, allowing non-specialists to deploy models that previously required specialist expertise to build. The takeaway for platform builders is clear: the market value has migrated from "building models" to "running, monitoring, and governing models" in the wild [1] [8].

The transition of artificial intelligence from experimental laboratories to mission-critical enterprise infrastructure has fundamentally altered the operational landscape of software development. As organizations move beyond pilot programs, the demand for robust AI Model Deployment & MLOps Platforms has surged, driven by the necessity to govern, scale, and monitor increasingly complex models. The market for Machine Learning Operations (MLOps) is witnessing an explosive trajectory, with valuations projected to rise from approximately $4.4 billion in 2024 to over $56 billion by the early 2030s, growing at a Compound Annual Growth Rate (CAGR) exceeding 37% [1], [2].
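As a rough consistency check on these figures, the sketch below relates the start value, end value, and growth rate using the standard compound-growth formula. The helper names are hypothetical, and the eight-year horizon (2024 to 2032) is an assumption made here to stand in for "the early 2030s"; the source does not name an exact end year.

```python
import math


def implied_cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate that takes `start` to `end` in `years`."""
    return (end / start) ** (1 / years) - 1


def years_to_reach(start: float, end: float, cagr: float) -> float:
    """Years needed to grow from `start` to `end` at a constant `cagr`."""
    return math.log(end / start) / math.log(1 + cagr)


start_busd, end_busd = 4.4, 56.0  # USD billions, figures quoted in the text

# Assumed horizon: 2024 -> 2032 (8 years).
print(f"implied CAGR over 8 years: {implied_cagr(start_busd, end_busd, 8):.1%}")
print(f"years needed at 37% CAGR: {years_to_reach(start_busd, end_busd, 0.37):.1f}")
```

Under that assumption the implied rate lands slightly above 37%, and a constant 37% rate needs roughly eight years, so the quoted figures are mutually consistent.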

This rapid expansion reflects a critical maturity point in the industry. While adoption rates are high—with 78% of organizations now using AI in at least one business function—the ability to extract tangible value remains concentrated among a select few. Recent research indicates that only 6% of enterprises qualify as "AI high performers," attributing significant Earnings Before Interest and Taxes (EBIT) impact to their AI deployments [3], [4]. The disparity between adoption and value realization highlights a profound operational gap: the challenge is no longer just building models, but industrializing them through reliable, scalable, and observable pipelines.

For decision-makers navigating the broader ecosystem of AI, Automation & Machine Learning Tools, understanding the nuance of this operational shift is vital. The current landscape is defined by the bifurcation of traditional MLOps (focused on predictive, deterministic models) and the emerging discipline of GenAIOps (focused on generative, probabilistic large language models). This report analyzes the prevailing trends, the operational bottlenecks stifling ROI, and the strategic implications for sectors dependent on high-velocity deployment.

The introduction of Large Language Models (LLMs) has necessitated a re-architecture of deployment platforms. Traditional MLOps was built on the premise of deterministic outcomes where success metrics were clear-cut (e.g., accuracy, precision, recall). However, the rise of Generative AI has introduced non-deterministic outputs, requiring a fundamentally different operational approach known as GenAIOps or LLMOps.

Unlike traditional machine learning which primarily processes structured data, GenAIOps must handle vast quantities of unstructured data such as text, images, and code. This shift complicates the data ingestion and preprocessing layers of the technology stack. Where traditional MLOps relies on automated feature engineering, GenAIOps heavily emphasizes "prompt engineering" and the management of vector databases for Retrieval-Augmented Generation (RAG) [5]. The operational burden shifts from training models from scratch to fine-tuning pre-trained foundation models and managing the retrieval context window to reduce hallucinations [6].
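To make the retrieval step concrete, here is a minimal sketch of the RAG pattern described above: documents are embedded, the closest ones to the query are retrieved, and they are placed in the prompt as context. Everything here is illustrative. The `embed` function is a toy bag-of-words stand-in for a real embedding model, the in-memory list stands in for a vector database, and the prompt wording is an assumption.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Assemble a prompt that restricts the model to the retrieved context."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


DOCS = [
    "refunds are issued within 14 days of a return",
    "standard shipping takes 3 to 5 business days",
    "gift cards never expire",
]

print(build_prompt("how long does shipping take", DOCS, k=1))
```

Limiting `k` is the simplest way to manage the context window: fewer, more relevant passages leave less room for the model to drift away from the source material.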

One of the most distinct trends in 2024 and 2025 is the integration of Human-in-the-Loop (HITL) workflows as a standard component of the deployment pipeline. In traditional MLOps, model drift could be detected mathematically. In GenAIOps, "drift" often manifests as a degradation in the helpfulness or safety of the generated content, metrics that are notoriously difficult to automate. Consequently, platforms are increasingly incorporating Reinforcement Learning from Human Feedback (RLHF) to align model behavior with business intent [7]. This requirement creates new operational bottlenecks, as scaling human review processes is significantly more resource-intensive than automated validation.
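For contrast, the kind of mathematical drift check available to traditional MLOps can be sketched in a few lines. The example below uses the Population Stability Index (PSI), one common choice that is not named in the source; the bin edges, sample values, and the 0.2 alert threshold (a widely used rule of thumb) are all assumptions for illustration.

```python
import math


def psi(expected: list[float], actual: list[float], edges: list[float]) -> float:
    """Population Stability Index between two samples over shared bin edges."""

    def shares(values: list[float]) -> list[float]:
        counts = [0] * (len(edges) - 1)
        for v in values:
            for i in range(len(edges) - 1):
                is_last_bin = i == len(edges) - 2
                # Bins are half-open, except the last one, which includes its upper edge.
                if edges[i] <= v < edges[i + 1] or (is_last_bin and v == edges[-1]):
                    counts[i] += 1
                    break
        total = max(sum(counts), 1)
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / total, 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


EDGES = [0.0, 0.25, 0.5, 0.75, 1.0]
training_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
live_scores = [0.6, 0.7, 0.8, 0.9, 0.9, 0.95, 0.8, 0.85]

drift = psi(training_scores, live_scores, EDGES)
print("drift detected" if drift > 0.2 else "stable")
```

No comparably simple statistic exists for "helpfulness" or "safety" of generated text, which is why the human review described above enters the pipeline.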

The economic model of deployment has also shifted. Traditional models are generally lightweight and cheap to run. In contrast, LLMs are computationally expensive, with inference costs often priced per token. This introduces unpredictability in operational expenditure (OpEx). Organizations are finding that the latency requirements for real-time user interaction—such as a customer service chatbot or a dynamic pricing engine—conflict with the massive computational load of modern Transformers [8], [6]. This has led to a surge in demand for platforms that offer advanced model quantization, distillation, and edge deployment capabilities to balance performance with cost.
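A back-of-the-envelope model shows why per-token pricing makes OpEx hard to predict: cost scales with both traffic and response length. The sketch below is illustrative only. The function name is hypothetical, and the prices and token counts are made-up placeholders rather than any vendor's actual rates.

```python
def monthly_inference_cost(
    requests_per_day: int,
    input_tokens: int,
    output_tokens: int,
    usd_per_1k_input: float,
    usd_per_1k_output: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend for a per-token priced model."""
    per_request = (
        (input_tokens / 1000) * usd_per_1k_input
        + (output_tokens / 1000) * usd_per_1k_output
    )
    return requests_per_day * days * per_request


# Hypothetical prices: $0.001 per 1k input tokens, $0.002 per 1k output tokens.
baseline = monthly_inference_cost(10_000, 500, 200, 0.001, 0.002)
longer_answers = monthly_inference_cost(10_000, 500, 400, 0.001, 0.002)

print(f"baseline: ${baseline:,.2f}")
print(f"with answers twice as long: ${longer_answers:,.2f}")
```

With these placeholder numbers, doubling the average answer length raises the monthly bill by more than 40% without any change in traffic, which is the kind of variance that quantization, distillation, and edge deployment aim to contain.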

Despite the enthusiasm for AI adoption, a significant portion of AI initiatives fail to graduate from the proof-of-concept (PoC) phase to full-scale production—a phenomenon often termed "pilot purgatory." Research suggests that nearly two-thirds of organizations have not yet begun scaling AI across the enterprise, stuck instead in isolated experiments [4]. Several systemic operational challenges contribute to this stagnation.

The most pervasive barrier to successful deployment is data readiness. AI models are sensitive to the quality of their input; garbage in results in garbage out. However, many enterprises struggle with data silos, legacy infrastructure, and inconsistent data formats that make creating a unified training or inference pipeline impossible. In the retail sector, for example, disparate inventory and customer data systems often prevent the seamless deployment of personalization models [9]. Furthermore, the "diminishing stocks" of high-quality public data for training frontier models are pushing organizations toward synthetic data, which carries its own risk of model collapse if not managed correctly [10].

Modern AI models must often operate within IT environments that were designed decades ago. Integrating a state-of-the-art predictive model into a legacy mainframe or an on-premise ERP system presents massive technical friction. This incompatibility leads to operational disruptions and high technical debt. For many organizations, the cost of refactoring legacy systems to accept API calls from an MLOps platform exceeds the projected immediate value of the AI model itself [11], [12].

The rapid evolution of the technology has outpaced the workforce's ability to upskill. There is a critical shortage of engineers capable of managing the full MLOps lifecycle—from data engineering to model monitoring. This gap is particularly acute in "hybrid" roles that require both domain expertise (e.g., healthcare, finance) and technical AI proficiency. Organizations report that the lack of in-house expertise is a primary reason for deployment delays, forcing reliance on external vendors or "black box" solutions that they cannot fully control or explain [13], [12].

As AI systems become autonomous agents capable of executing tasks, the risks associated with deployment escalate. Regulatory frameworks like the EU AI Act and GDPR impose strict requirements on explainability and data privacy. Operationalizing these compliance requirements is difficult. MLOps platforms must now provide robust audit trails, version control for data (not just code), and automated bias detection. In sectors like finance and healthcare, the "black box" nature of deep learning models remains a significant hurdle to deployment, as stakeholders must be able to explain why a model made a specific decision [14], [15].
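One way to picture "version control for data (not just code)" is an audit record that pins a model version to a content hash of its training data. The sketch below is an assumption-laden illustration: the record fields, the function names, and the example values are hypothetical, and a real platform would store far more metadata.

```python
import hashlib
import json


def fingerprint(rows: list[dict]) -> str:
    """Content hash of a dataset, stable across key ordering within rows."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_record(model: str, version: str, rows: list[dict], code_commit: str) -> dict:
    """Tie a model version to the exact data and code that produced it."""
    return {
        "model": model,
        "model_version": version,
        "data_sha256": fingerprint(rows),
        "code_commit": code_commit,
    }


# Hypothetical training rows and identifiers, for illustration only.
rows_v1 = [{"income": 52000, "approved": 1}, {"income": 18000, "approved": 0}]
rows_v2 = [{"income": 52000, "approved": 1}, {"income": 18000, "approved": 1}]

record = audit_record("credit-scoring", "1.4.0", rows_v1, "abc1234")
print(json.dumps(record, indent=2))
```

Because any change to the data changes the hash, an auditor can later confirm which dataset a given decision-making model was trained on.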

While the challenges of MLOps are universal, their manifestation varies significantly across industries. The operational pressures in ecommerce differ vastly from those in marketing agencies or manufacturing.

For online retailers, the deployment of AI is directly tied to conversion rates. Recommender systems, dynamic pricing engines, and visual search tools operate in a high-stakes, real-time environment. A delay of mere milliseconds in serving a model prediction can result in cart abandonment. Consequently, AI Model Deployment & MLOps Platforms for Ecommerce Brands prioritize low-latency inference and high availability. These platforms must handle massive spikes in traffic during peak seasons (e.g., Black Friday) without degradation in performance. Furthermore, the integration of visual search—allowing users to upload images to find products—requires specialized pipelines for computer vision that are distinct from standard tabular data models [16], [17].

In the marketing domain, the focus shifts from latency to creativity and content velocity. Agencies are leveraging Generative AI to produce copy, images, and video assets at scale. The operational challenge here is managing the "brand voice" and ensuring consistency across thousands of generated assets. AI Model Deployment & MLOps Platforms for Marketing Agencies are evolving to include sophisticated prompt management libraries and style-guide enforcement mechanisms. Unlike ecommerce, where the output is a product ID or price, marketing outputs are subjective. Therefore, these platforms often feature robust approval workflows that allow human creative directors to review and iterate on AI-generated content before it goes live [18].

Looking toward 2025 and beyond, the industry is poised for a shift from passive "advisory" AI to active "agentic" AI. While current MLOps practices focus on deploying models that answer questions or make predictions, the next wave involves deploying autonomous agents that can plan and execute complex workflows [3].

Agentic AI systems can autonomously interact with other software, APIs, and environments to complete multi-step tasks (e.g., "Plan a travel itinerary and book the flights"). This adds a new layer of complexity to MLOps. Deployment platforms must now support "tool use" capabilities, allowing models to securely authenticate and interact with external systems. Monitoring also becomes considerably harder; operators need to track not just the output of a model, but the sequence of actions taken by an agent and the potential side effects of those actions on business systems [4], [19].
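The monitoring requirement can be sketched as a trace that records every tool call an agent makes and flags the ones that change external state. This is a minimal illustration, not a description of any specific platform: the `AgentTrace` class and the two travel tools are hypothetical, and the tools are stubs that return canned data.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Step:
    tool: str
    args: dict
    result: Any
    side_effect: bool  # True when the call changes an external system


@dataclass
class AgentTrace:
    steps: list[Step] = field(default_factory=list)

    def call(self, tool: str, fn: Callable[..., Any], *, side_effect: bool = False, **args: Any) -> Any:
        """Run a tool and record what was called, with what, and what came back."""
        result = fn(**args)
        self.steps.append(Step(tool, args, result, side_effect))
        return result

    def side_effects(self) -> list[str]:
        """Names of recorded calls that modified external state."""
        return [s.tool for s in self.steps if s.side_effect]


# Hypothetical stub tools standing in for real travel APIs.
def search_flights(origin: str, destination: str) -> list[str]:
    return [f"{origin}-{destination}-0900", f"{origin}-{destination}-1700"]


def book_flight(flight_id: str) -> dict:
    return {"flight_id": flight_id, "status": "confirmed"}


trace = AgentTrace()
options = trace.call("search_flights", search_flights, origin="LHR", destination="JFK")
trace.call("book_flight", book_flight, side_effect=True, flight_id=options[0])

print([s.tool for s in trace.steps])
print(trace.side_effects())
```

Separating read-only calls from state-changing ones gives operators a natural place to attach approvals or rollbacks to the riskier steps.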

As the cost of cloud inference remains high and privacy concerns grow, there is a trend toward pushing model execution to the edge. The Edge AI market is projected to grow significantly, reaching over $66 billion by 2030 [20]. This requires MLOps platforms to support "hybrid" deployment architectures, where a large foundation model might reside in the cloud for complex reasoning, while smaller, distilled models run locally on user devices for speed and privacy. Orchestrating version control and monitoring across this distributed infrastructure is a key frontier for platform innovation.
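A hybrid architecture ultimately needs a routing rule that decides where each request runs. The sketch below shows one possible policy under stated assumptions: the `Request` fields, the routing order, and the 256-token budget for the on-device model are all hypothetical, and whitespace word count is used as a crude proxy for token count.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    prompt: str
    contains_personal_data: bool = False
    needs_complex_reasoning: bool = False


def route(request: Request, edge_token_budget: int = 256) -> str:
    """Pick a deployment target for a request: 'edge' or 'cloud'."""
    # Privacy first: personal data stays on the device.
    if request.contains_personal_data:
        return "edge"
    # Complex reasoning goes to the large cloud-hosted foundation model.
    if request.needs_complex_reasoning:
        return "cloud"
    # Otherwise prefer the small local model when the prompt fits its budget.
    approx_tokens = len(request.prompt.split())
    return "edge" if approx_tokens <= edge_token_budget else "cloud"


print(route(Request("autocomplete this sentence")))
print(route(Request("compare these three contracts", needs_complex_reasoning=True)))
print(route(Request("summarize my medical notes", contains_personal_data=True)))
```

Whatever the policy, each target runs a different model version, which is why the version control and monitoring challenge described above spans both sides of the split.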

The MLOps market is currently fragmented, with hundreds of point solutions addressing specific slices of the stack (e.g., only feature stores, only monitoring, only training). However, a consolidation wave is underway. Enterprises prefer end-to-end platforms that provide a unified control plane for the entire lifecycle. We are seeing a convergence where major cloud providers and specialized MLOps unicorns are acquiring smaller players to offer comprehensive "AI Operating Systems" that integrate data prep, training, deployment, and governance [21], [20].

The successful industrialization of AI requires more than just purchasing the right software. It demands a holistic operational strategy that aligns technology with business outcomes.

The era of experimentation is ending. The organizations that will dominate the next decade are those that treat AI model deployment not as a science project, but as a core engineering discipline. By leveraging robust MLOps platforms and addressing the operational hurdles of data, talent, and governance, businesses can bridge the gap between AI potential and profitable reality.